Hugging Face CLI Practical Guide

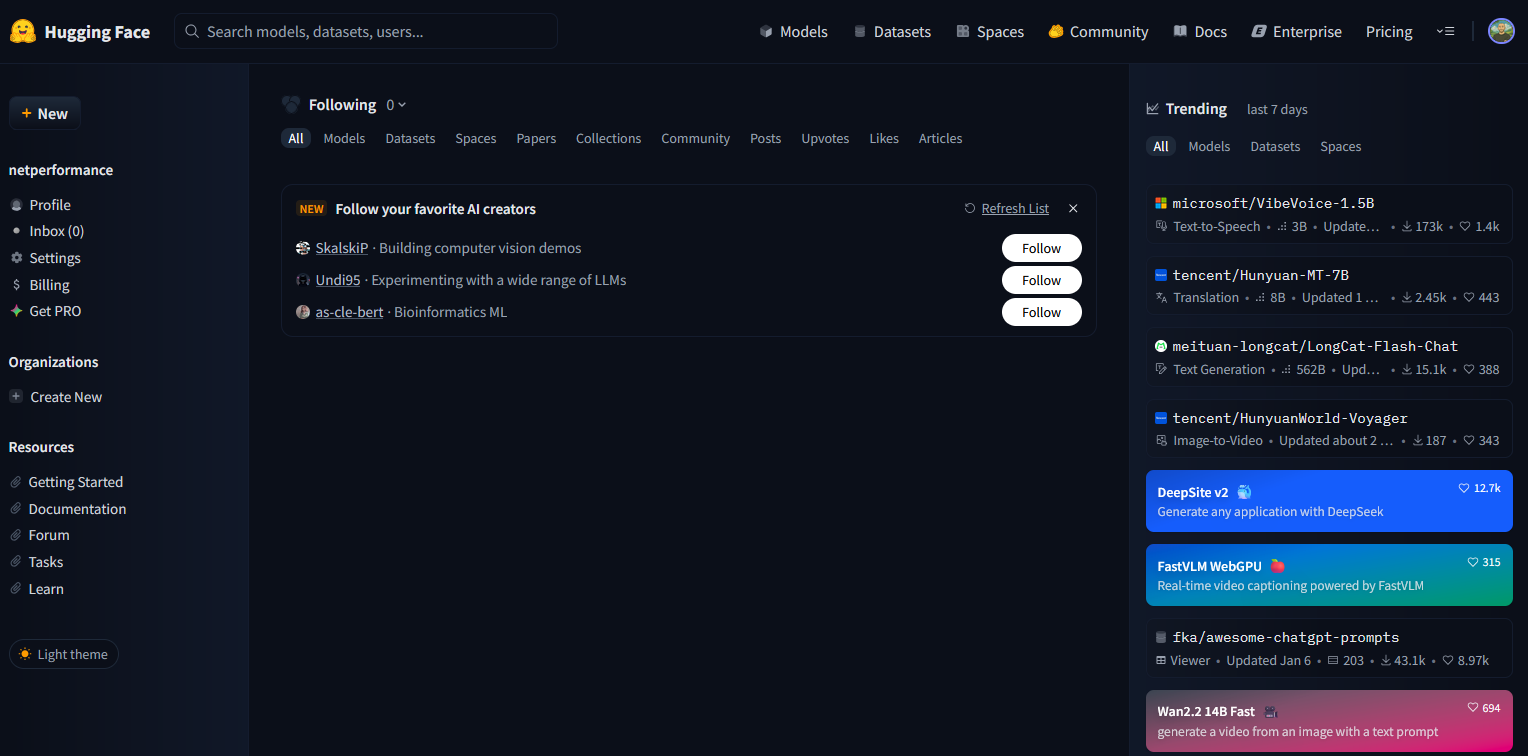

This guide covers the Hugging Face CLI from version 0.34.4 onwards. As of this version, the old huggingface-cli syntax has been replaced by the new hf command. I created this cheat sheet as a concise reference to the Hugging Face CLI: instead of searching the official documentation, I can find the most important commands, descriptions and examples here at a glance.

What is Hugging Face?

Hugging Face is a platform for machine learning. At its core is the Hugging Face Hub, which hosts public and private repositories for AI models, datasets and applications (Spaces). Developers can share, download and reuse models there. In addition to the Hub, Hugging Face also offers libraries such as transformers, datasets and diffusers that make it easier to use AI models in practice. The Hub thus serves both as a marketplace and as infrastructure for collaborative development. ...