Introduction

Every day, hundreds of new articles about AI and technology appear, and no one has the time to sift through all of them manually. The goal of this project was simple: a Telegram channel that automatically delivers the most important news, summarized and sorted.

The result of this system is accessible to everyone. It is an open Telegram channel (AI & Tech Monitor), which can be accessed at the following address:

The system consists of the following main components:

n8n (Workflow Automation)

A node-based platform for process automation. It serves as an orchestrator, connecting the APIs of the various services and executing the logic for data retrieval, processing and delivery.

Baserow (Database)

A relational open-source database. It serves as the backend for managing the RSS sources and stores the articles as well as their processing status (e.g. “sent”) to prevent redundancies.

Ollama (AI Inference)

A runtime environment for locally running language models. Llama 3.2 is used here to analyze and summarize the texts of the RSS feeds server-side without using external cloud APIs.

Telegram (Frontend)

The output medium for end users. The final summaries are pushed to the defined channel via the Telegram Bot API.

Docker (Infrastructure)

The application environment. All services (n8n, Baserow, Ollama) are run in isolated containers, minimizing dependencies on the host operating system.

How the n8n Workflow works

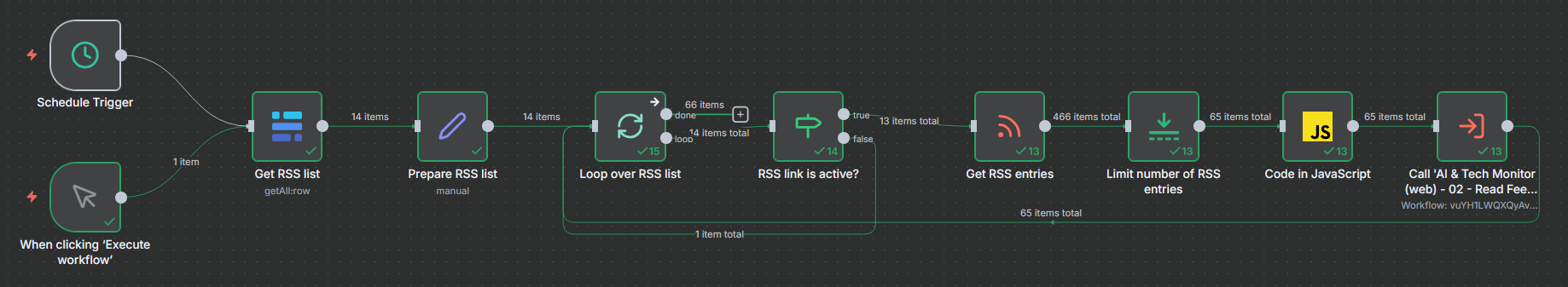

The system implements an automated pipeline that aggregates RSS content, summarizes it with AI and publishes the results via Telegram.

Workflow 01 - Read Feeds: This workflow acts as the central trigger and data collector of the system. It starts automatically every 30 minutes and first retrieves a list of RSS feeds to monitor from a Baserow database. After checking whether the respective feed links are marked as active, it downloads the current articles from these feeds and limits the number to a maximum of five entries per run. These raw article data are then structured and passed individually to the second workflow, which is invoked as a sub-workflow for content processing.
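The filtering and limiting steps of this workflow can be sketched outside n8n in a few lines of Python. This is only an illustration of the logic, not an export of the actual workflow: the field names `url` and `active` are hypothetical stand-ins for the Baserow columns, the feed is assumed to be plain RSS 2.0, and applying the cap of five to each downloaded feed is an assumption.

```python
import xml.etree.ElementTree as ET

MAX_ENTRIES = 5  # cap from the text; applying it per feed is an assumption


def active_feed_urls(rows):
    """Return the URLs of all feed rows flagged as active.

    `url` and `active` are hypothetical field names for the Baserow table.
    """
    return [row["url"] for row in rows if row.get("active")]


def parse_entries(rss_xml, limit=MAX_ENTRIES):
    """Extract title, link and description from the first `limit` RSS items."""
    root = ET.fromstring(rss_xml)
    entries = []
    for item in root.iter("item"):
        if len(entries) >= limit:
            break
        entries.append({
            "title": (item.findtext("title") or "").strip(),
            "link": (item.findtext("link") or "").strip(),
            "content": (item.findtext("description") or "").strip(),
        })
    return entries
```

Each dictionary returned by `parse_entries` corresponds to one article that would be handed to the second workflow.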

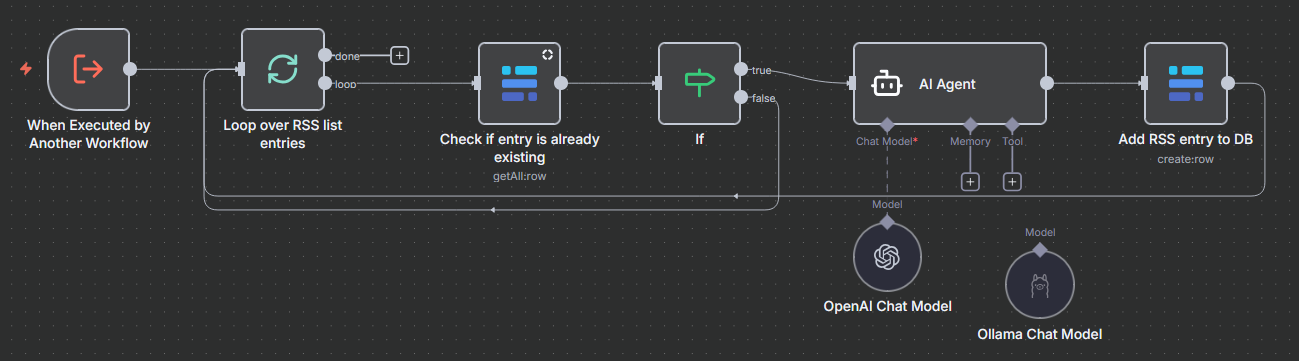

Workflow 02 - Read Feed-Entries: This workflow is responsible for processing and storing the articles and receives the article data directly from the first workflow. For each incoming article, it first checks in the Baserow database whether the link already exists, so that duplicates are reliably excluded. If the article is new, the workflow passes the content to an AI agent running via Ollama (model Llama 3.2), which generates a short summary of the text. The result, consisting of the title, link and generated summary, is stored in the database.
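As a rough sketch of the same idea in Python: the duplicate check is a simple lookup on the link, and the summary is requested from Ollama's local HTTP endpoint `/api/generate`. The n8n workflow itself uses an AI agent node rather than a direct HTTP call, so this is a swapped-in simplification. The host and port, the model tag `llama3.2`, the prompt wording and the record fields are all assumptions made for illustration.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # assumed local Ollama endpoint
MODEL = "llama3.2"  # assumed model tag


def build_summary_request(article, model=MODEL):
    """Build the JSON payload for a non-streaming Ollama generate call."""
    prompt = (
        "Summarize the following article in two or three sentences.\n\n"
        f"Title: {article['title']}\n\n{article['content']}"
    )
    return {"model": model, "prompt": prompt, "stream": False}


def summarize(article, url=OLLAMA_URL):
    """Send the article to Ollama and return the generated summary text."""
    body = json.dumps(build_summary_request(article)).encode("utf-8")
    req = urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(req) as resp:
        return json.loads(resp.read())["response"].strip()


def process(article, known_links, summarizer=summarize):
    """Skip known links; otherwise summarize and return a record to store."""
    if article["link"] in known_links:
        return None  # duplicate, nothing to store
    record = {
        "title": article["title"],
        "link": article["link"],
        "summary": summarizer(article),
        "sent": False,  # hypothetical status field, set later by workflow 03
    }
    known_links.add(article["link"])
    return record
```

Passing the summarizer in as a parameter keeps the duplicate check testable without a running Ollama instance.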

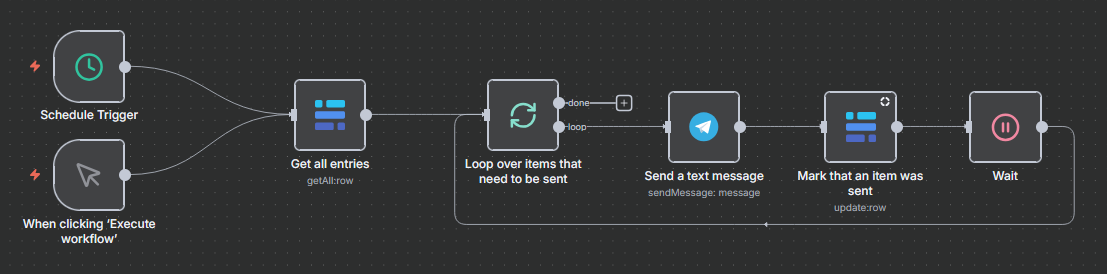

Workflow 03 - Telegram Message: The third workflow handles the publication of the processed content. It searches the Baserow database specifically for entries that have been fully processed but not yet marked as “sent”. For each of these pending items, the workflow sends a formatted message with the title, the AI summary and the link to a defined Telegram channel. Immediately after sending, it updates the entry’s status in the database to “sent” (True) to ensure that no message is published twice.
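The select, send and mark-as-sent loop might look roughly like this in Python. The record fields (`title`, `summary`, `link`, `sent`) and the plain-text message layout are assumptions, and "fully processed" is interpreted here as "has a non-empty summary". The `sendMessage` call goes to the Telegram Bot API; the token and chat ID are placeholders that you would supply yourself.

```python
import json
import urllib.request


def pending(rows):
    """Rows that have a summary but are not yet marked as sent."""
    return [r for r in rows if r.get("summary") and not r.get("sent")]


def format_message(row):
    """Plain-text layout: title, summary, link (an assumed format)."""
    return f"{row['title']}\n\n{row['summary']}\n\n{row['link']}"


def send_to_telegram(token, chat_id, text):
    """Post one message via the Bot API sendMessage method."""
    url = f"https://api.telegram.org/bot{token}/sendMessage"
    body = json.dumps({"chat_id": chat_id, "text": text}).encode("utf-8")
    req = urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(req) as resp:
        return json.loads(resp.read())["ok"]


def publish(rows, send):
    """Send every pending row and flag it as sent only after success."""
    sent_count = 0
    for row in pending(rows):
        if send(format_message(row)):
            row["sent"] = True
            sent_count += 1
    return sent_count
```

In use, `send` could be something like `lambda text: send_to_telegram(token, chat_id, text)`. Because the flag is set only after a successful send, a second run over the same rows publishes nothing twice.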

Since my primary focus here was testing the interaction between the tools, the current implementation only covers the happy path and still needs proper error handling before it is suitable for long-term deployment.