Introduction: Why control over data determines AI success

Artificial intelligence does not emerge in a vacuum; it relies on data as fuel. A neural network without a broad, high-quality data foundation can neither process language nor identify objects nor derive meaningful recommendations. For companies, the consequence is clear: whoever loses control over their own data leaves the crude oil of the digital economy to external platforms.

The concept of data sovereignty describes exactly this factual and legal dominion over one’s own data holdings and goes far beyond classical data protection. While data protection primarily aims to safeguard individuals’ fundamental rights by protecting their information, data sovereignty represents a strategic and economic question. It is about who owns data and who is allowed to use it in which way. This determines whether data becomes a valuable competitive advantage or flows unnoticed into the value chains of others.

Why data is crucial for the functioning of AI

The relevance of data sovereignty becomes particularly apparent when using AI, because the performance of AI depends directly on the data available. AI models learn from a multitude of examples which patterns are hidden in reality and apply these insights to new scenarios. Both the quantity and, above all, the quality of the training data are of central importance. Large generative systems, such as language models, use billions of parameters to generate natural language convincingly. How much data such a model requires depends on the model size, the specific task and the diversity of the content. Low-quality data cannot be compensated for by simply increasing the quantity; on the contrary, more of it amplifies errors. For companies, this means that what matters is not only access to large volumes of data, but also the ability to use relevant, cleaned and consistent data sets specifically for the development of AI. Whoever loses control at this point risks inaccurate results, rising costs and dependence on external providers.

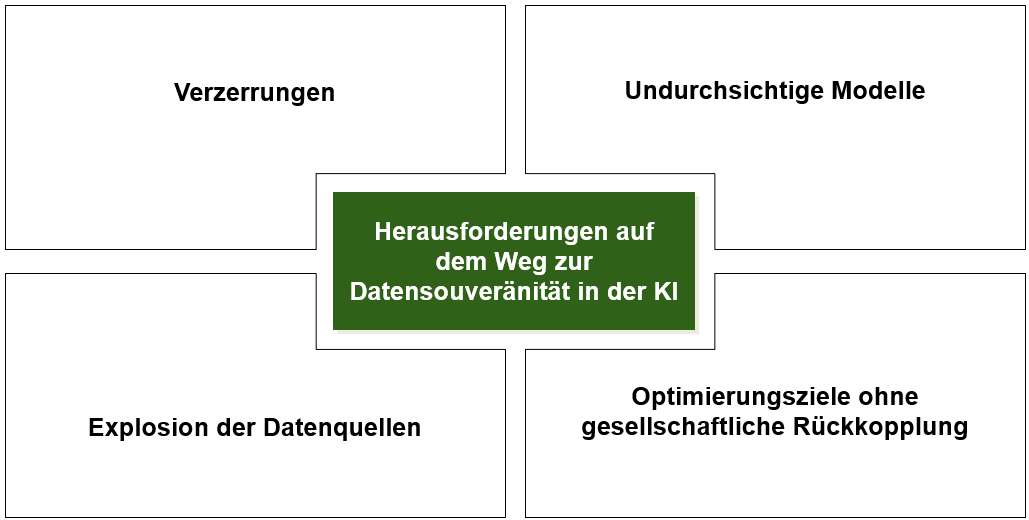

Challenges in securing data sovereignty

Establishing data sovereignty for AI is a process that requires much more than just access to large volumes of data. The path to sovereign AI systems is paved with numerous challenges. The following difficulties often arise and should be addressed as early as possible.

Biases: Bias can arise in every phase of AI development. Often, existing societal inequalities in the data are accepted as given and reproduced by the model. During data collection and annotation, personal or cultural prejudices can be introduced. If certain population groups are underrepresented in the training data, the model primarily learns the patterns of the majority. Similarly, mathematical optimization can lead to minorities being weighted less, which results in less accurate predictions or recommendations for these groups.
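Underrepresentation of this kind can be made visible with a simple per-group report. The following is a minimal sketch, assuming that each example carries a group label; the function name `group_report` and the toy data are hypothetical and serve only as illustration.

```python
from collections import Counter, defaultdict


def group_report(groups, y_true, y_pred):
    """Return {group: (share_of_data, accuracy)} for each group label."""
    if not (len(groups) == len(y_true) == len(y_pred)):
        raise ValueError("all inputs must have the same length")
    counts = Counter(groups)
    correct = defaultdict(int)
    for g, t, p in zip(groups, y_true, y_pred):
        correct[g] += int(t == p)
    total = len(groups)
    return {g: (counts[g] / total, correct[g] / counts[g]) for g in counts}


# Hypothetical toy data: group "B" makes up only 20% of the examples.
groups = ["A"] * 8 + ["B"] * 2
y_true = [1, 0, 1, 1, 0, 1, 0, 1, 1, 0]
y_pred = [1, 0, 1, 1, 0, 1, 0, 1, 0, 0]

report = group_report(groups, y_true, y_pred)
for g, (share, acc) in sorted(report.items()):
    print(f"group {g}: share={share:.0%}, accuracy={acc:.0%}")
```

A large gap between groups in either share or accuracy is a signal to collect more data for the smaller group or to reweight it during training.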

Opaque models: Many AI models appear as opaque systems, so-called black boxes. Although their calculations are theoretically traceable, the immense number of parameters and the complexity of interactions make it practically impossible for people to fully understand the exact decision-making process. Without supplementary methods for explainability, it is difficult to determine which factors influenced a decision and whether it was fair. A higher degree of transparency promotes trust and allows control by users and supervisory authorities.

Optimization objectives without social responsibility: When an AI is trained solely on goals that are quick and easy to measure, for example persuading as many users as possible to purchase a product, it can end up delivering aggressive, personalized advertising to particularly vulnerable groups. This maximizes the purchase probability, even though such an approach is ethically questionable.

The growing number of data sources: Companies nowadays generate data across numerous platforms, for example in cloud services, software-as-a-service applications and social media. This data is often stored in different locations and not consolidated into a central system. A so-called data map provides an overview showing where in the company data is generated, who uses it and whether it is modified on its way. If data is neither understood nor findable or controllable, it turns from an advantage into a potential threat.
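A data map does not require special tooling to get started. The sketch below models one as a list of records; the class `DataAsset`, its fields and the example entries are assumptions chosen for illustration, not a standard schema.

```python
from dataclasses import dataclass, field


@dataclass
class DataAsset:
    name: str
    source_system: str            # where in the company the data is generated
    owner: str                    # accountable role or team ("" if unknown)
    consumers: list = field(default_factory=list)        # who uses it
    transformations: list = field(default_factory=list)  # changes on its way
    contains_personal_data: bool = False


def unowned_or_unused(assets):
    """Return the names of assets that have no owner or no known consumer."""
    return [a.name for a in assets if not a.owner or not a.consumers]


# Hypothetical entries for three data sources.
data_map = [
    DataAsset("crm_contacts", "SaaS CRM", "sales-ops",
              ["marketing"], ["deduplicated"], True),
    DataAsset("machine_logs", "plant sensors", "", ["maintenance"]),
    DataAsset("social_mentions", "social media export", "marketing"),
]

print(unowned_or_unused(data_map))
```

Entries without an owner or without a known consumer are the first candidates for review, because nobody is accountable for them or nobody can say why they are collected.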

The data economy is flourishing

Despite the aforementioned challenges in dealing with data, the market for artificial intelligence is growing very rapidly. Companies invest large sums in the management, preparation and quality assurance of data to make it available, usable and secure.

According to a report by Fortune Business Insights, the global AI market amounted to approximately 233.46 billion US dollars in 2024 and is expected to rise to 1,771 billion US dollars by 2032. At the same time, the markets for data management, data labeling and training data sets are also expanding. The market for AI data management had a volume of 25.50 billion US dollars in 2023 and is expected to grow to over 104.00 billion US dollars by 2030. Services for data annotation, which are essential for supervised learning, reached a size of 18.60 billion US dollars in 2024 and are likely to increase to 57.60 billion US dollars by 2030. Demand for synthetic data is also rising. A market of 0.51 billion US dollars in 2025 is expected to grow to 2.67 billion US dollars by 2030, as companies need anonymized and realistic data sets for privacy-compliant training purposes. These figures demonstrate that data is the new foundation of the economy. Companies are investing billions in data preparation, annotation and quality assurance. At the same time, the availability of high-quality data is a critical factor for the performance of AI systems.
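To make these forecasts comparable, the implied compound annual growth rate can be derived from each pair of figures. The sketch below uses only the numbers quoted above; the helper `cagr` is a hypothetical name, and the value for AI data management is a lower bound because the forecast reads "over 104.00 billion".

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate implied by a start and an end value."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# (start in bn USD, end in bn USD, start year, end year), as quoted in the text
forecasts = {
    "global AI market": (233.46, 1771.0, 2024, 2032),
    "AI data management": (25.50, 104.00, 2023, 2030),
    "data annotation": (18.60, 57.60, 2024, 2030),
    "synthetic data": (0.51, 2.67, 2025, 2030),
}

for name, (start, end, first_year, last_year) in forecasts.items():
    rate = cagr(start, end, last_year - first_year)
    print(f"{name}: {rate:.1%} per year")
```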

Growth markets: Data management, annotation and synthetic data

The AI industry is undergoing a phase of strong consolidation. Large platforms are acquiring specialized data companies to secure access to high-quality data sets. A prominent example is Microsoft’s acquisition of the speech recognition company Nuance for 19.7 billion US dollars.

At the same time, the market for synthetic data is booming. Synthetic data sets are created by generative models and exhibit the same statistical characteristics as real data without containing personal information. This makes it possible to learn from confidential or rare patterns without disclosing the original data, which allows AI solutions to be developed and tested securely.

The competition for qualified professionals in the field of AI has evolved into an intensive talent search. Large technology corporations are not only securing companies with valuable data sets but also competing for the best experts. According to media reports, Microsoft has poached professionals from Apple, enticing them with salaries in the millions and extensive stock packages. The amounts offered are reminiscent of transfer fees in professional sports, as specialists in language processing, machine learning and computer vision are particularly sought after. Such recruitments are accompanied by long-term bonus programs and high research budgets.

Which data is considered worthy of protection?

With the growth of the data economy, the question increasingly arises as to which data is particularly critical for companies and therefore requires special protection. Not all data has the same level of criticality. The following are considered worthy of protection:

Personal data: Information that can be associated with a specific person, such as name, address, biometric characteristics, health data or financial information.

Trade secrets and research data: Product formulas, algorithms, market studies or research results whose disclosure would diminish the competitive advantage.

Sensor and production data: Data from machines can provide insights into production processes and are therefore worth protecting.

Combined data: By merging different data sources, seemingly innocuous information can allow conclusions about consumer behavior or political beliefs. Therefore, companies should always analyze which inferences could be drawn from their data.

For true data sovereignty, it is not enough to merely possess large volumes of data. What matters is whether this data is relevant, consistent and usable for the respective AI application. An unfiltered mass of data can, in the worst case, even degrade the quality of the model. Value is only created when data is deliberately selected, structured and put into a meaningful context. This is exactly where the idea of Smart Data comes in.

Smart Data instead of Big Data: quality is more important than quantity

Smart Data represents a conscious handling of information. The focus is not on sheer quantity but on the relevance and quality of the data. For artificial intelligence, this means that data sets are tailored, cleaned and enriched specifically for the task at hand. In this way, data foundations emerge that are meaningful and efficiently usable.

While Big Data is often used as an umbrella term for large and heterogeneous data volumes, Smart Data focuses on targeted selection, clean structure and clear assignment. For example, a small but carefully annotated data set can prepare a language model for a specific task more effectively than terabytes of unstructured and irrelevant content.

The advantage of Smart Data lies in its clear focus on a goal. Data is filtered so that it contains only the information that is relevant to a specific AI task. It is consistent, up-to-date and traceable, which not only enhances the model’s performance but also facilitates compliance with regulatory and data protection requirements. For companies, this means: those who master Smart Data achieve more accurate results, save computing resources and at the same time retain control over their most valuable data.

For Smart Data to become a strategic advantage, technical concepts are required that guarantee companies full control over their data, even when it is processed or shared for AI use.

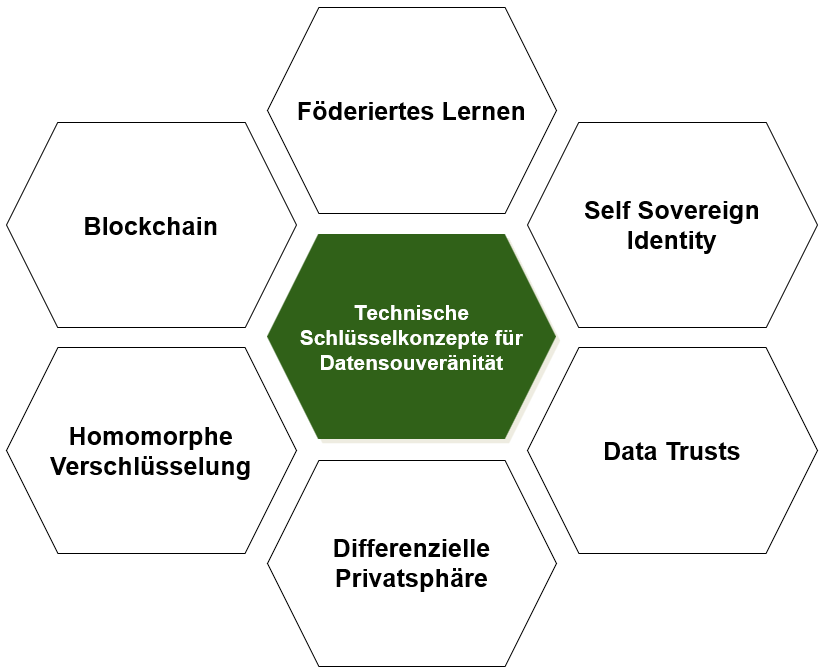

Technical key concepts for ensuring data sovereignty

Technical approaches for strengthening data sovereignty are essential because they allow AI systems to be used without relinquishing control over sensitive data. AI requires large amounts of information to function reliably, yet much of this information is confidential or subject to strict data protection regulations. The following methods allow this data to be used securely without exposing it unprotected.

Federated learning: In this approach, data is not transferred to a central server. Instead, computations take place locally where the data resides, and only the resulting updated model parameters are forwarded. In this way, for example, multiple clinics can jointly optimize their diagnostic systems without exchanging patient data.
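The principle can be sketched with federated averaging on a tiny linear model. This is a simplified illustration under stated assumptions: the two clinics, their data points and all function names are hypothetical, and the secure aggregation and communication layers that production systems add are omitted.

```python
def local_update(weights, data, lr=0.05, epochs=20):
    """Run a few gradient steps for y ~ w*x + b on one client's private data."""
    w, b = weights
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return (w, b)


def federated_average(updates, sizes):
    """Average client parameters, weighted by each client's sample count."""
    total = sum(sizes)
    return tuple(
        sum(u[i] * s for u, s in zip(updates, sizes)) / total
        for i in range(len(updates[0]))
    )


def train(clients, rounds=50):
    """Server loop: only parameters travel, the raw data stays with the clients."""
    global_weights = (0.0, 0.0)
    for _ in range(rounds):
        updates = [local_update(global_weights, data) for data in clients]
        global_weights = federated_average(updates, [len(d) for d in clients])
    return global_weights


# Hypothetical private data of two clinics, both following y = 2x + 1.
clinic_a = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0)]
clinic_b = [(3.0, 7.0), (4.0, 9.0)]

w, b = train([clinic_a, clinic_b])
print(f"w={w:.3f}, b={b:.3f}")
```

The server in `train` never sees a data point, only the `(w, b)` pairs returned by each client. Note that model updates can still leak information, which is why federated learning is often combined with differential privacy or encryption.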

Self-sovereign identity: This concept means that users manage their digital identity themselves in their own digital wallet. They decide autonomously which information they want to share. On an online platform, a user could thus prove they are of legal age without disclosing their full name or address.

Data Trusts: A data trust is a fiduciary organization in which data owners delegate the management of their data to an independent entity that acts in the interest of all stakeholders. Multiple hospitals could thereby combine patient data in anonymized form to jointly promote medical research. The trustee defines who may access which data and ensures transparency and fair use.

Differential privacy: In this method, calibrated statistical noise is deliberately added to the data or to the results of queries on it. This allows aggregate analyses while limiting what can be inferred about any individual person. One use case is the evaluation of movement data from a fitness app to identify general trends without storing the exact routes of individual users.
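One common way to add such noise is the Laplace mechanism applied to a counting query. The sketch below assumes a hypothetical list of weekly running distances from a fitness app; the function names and the choice of `epsilon` are illustrative, and a real deployment would also track the privacy budget across queries.

```python
import random


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(values, predicate, epsilon, rng):
    """Noisy count of matching values; a counting query has sensitivity 1."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    true_count = sum(1 for v in values if predicate(v))
    return true_count + laplace_noise(1.0 / epsilon, rng)


# Hypothetical weekly running distances (km) of six users.
distances_km = [5.2, 21.0, 12.5, 30.1, 8.0, 25.4]

rng = random.Random()
noisy = private_count(distances_km, lambda d: d > 20, epsilon=0.5, rng=rng)
print(f"users above 20 km (noisy): {noisy:.1f}")
```

A smaller `epsilon` means more noise and stronger privacy, while a larger `epsilon` gives more accurate results at the cost of weaker protection.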

Homomorphic encryption: This method allows computations to be performed on encrypted data without decrypting it first. A practical example is a bank that assesses a customer’s creditworthiness. The customer transmits their income and expenditure data in encrypted form. The bank then performs specific mathematical operations directly on this encrypted data to determine, for example, the ratio of income to expenditure. The result of this calculation remains encrypted and is only decrypted by the customer. In this way, the bank can make a lending decision without ever seeing the exact amounts in plain text.
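The idea can be demonstrated with a toy version of the Paillier cryptosystem, which is additively homomorphic: ciphertexts can be added and multiplied by plain integers, but not divided. The sketch therefore computes the encrypted surplus (income minus expenditure) rather than the ratio mentioned above, which would need a fully homomorphic scheme. The primes are deliberately tiny and insecure, and all function names and amounts are hypothetical.

```python
import math
import secrets


def keygen(p=1009, q=1013):
    """Toy key generation; real deployments use primes of 1024 bits or more."""
    n = p * q
    lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)  # lcm(p-1, q-1)
    mu = pow(lam, -1, n)  # valid because the generator is fixed to g = n + 1
    return n, (lam, mu)


def encrypt(n, m):
    """Encrypt integer m (0 <= m < n) under public key n."""
    n2 = n * n
    while True:
        r = secrets.randbelow(n - 1) + 1
        if math.gcd(r, n) == 1:
            break
    return (pow(n + 1, m, n2) * pow(r, n, n2)) % n2


def decrypt(n, private_key, c):
    lam, mu = private_key
    x = pow(c, lam, n * n)
    return ((x - 1) // n * mu) % n


def add_encrypted(n, c1, c2):
    """Multiplying ciphertexts adds the underlying plaintexts."""
    return (c1 * c2) % (n * n)


def scale_encrypted(n, c, k):
    """Raising a ciphertext to the power k multiplies the plaintext by k."""
    return pow(c, k, n * n)


# Customer side: generate keys and encrypt the hypothetical amounts.
n, private_key = keygen()
enc_income = encrypt(n, 4200)
enc_expenses = encrypt(n, 3100)

# Bank side: compute income - expenses without seeing either value.
enc_surplus = add_encrypted(n, enc_income, scale_encrypted(n, enc_expenses, -1))

# Customer side: only the holder of the private key can read the result.
print(decrypt(n, private_key, enc_surplus))
```

Results are computed modulo `n`, so this toy only works while the true result stays between 0 and `n - 1`. Requires Python 3.8 or later for modular inverses via `pow`.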

Blockchain: A blockchain acts as a tamper-evident, decentralized ledger in which data accesses and transactions are permanently recorded. This makes it possible to trace at any time who accessed which data and when. In the food industry, this can be used to document the entire supply chain and verify the origin of a product without gaps.
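The property that makes such a ledger trustworthy is the hash chain: every record contains the hash of its predecessor, so a later change breaks all following links. The sketch below shows only this core idea in a single process. It is not a decentralized blockchain, since consensus and replication across nodes are left out, and the record fields are assumptions for illustration.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder predecessor hash for the first record


def entry_hash(body):
    """SHA-256 over a canonical JSON encoding of the record body."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_access(log, user, dataset, timestamp):
    """Append an access record that is linked to the previous record's hash."""
    prev = log[-1]["hash"] if log else GENESIS
    body = {"user": user, "dataset": dataset,
            "timestamp": timestamp, "prev_hash": prev}
    log.append({**body, "hash": entry_hash(body)})


def verify(log):
    """Return True only if every record is unmodified and correctly linked."""
    prev = GENESIS
    for record in log:
        body = {k: v for k, v in record.items() if k != "hash"}
        if record["prev_hash"] != prev or entry_hash(body) != record["hash"]:
            return False
        prev = record["hash"]
    return True


# Hypothetical access events.
log = []
append_access(log, "alice", "patient_scans", "2025-01-10T09:00:00Z")
append_access(log, "bob", "patient_scans", "2025-01-10T09:05:00Z")
print(verify(log))
```

Changing any field of an earlier record afterwards makes `verify` return `False`, which is what allows auditors to detect manipulation.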

These technologies demonstrate that data protection and the use of AI are not mutually exclusive. In the right combination, they enable responsible data use without jeopardizing sovereignty over the data. However, technical solutions alone are not sufficient. To ensure responsible handling of data and AI in a binding manner, clear legal frameworks are necessary.

Why clear rules for artificial intelligence are essential

Regulations in the field of artificial intelligence are crucial for steering technological progress onto safe and responsible paths. Without clear guidelines, AI systems could be deployed that deliberately manipulate people, discriminate or significantly violate their privacy. A negative example is the criticism of Elon Musk’s AI “Grok”, which according to reports was modified to reflect Musk’s personal views more strongly and to favor his position on controversial topics. However, there are also positive examples for the protection of citizens’ rights: Denmark is developing a law that grants its citizens the copyright to their own face, voice and other personal characteristics. This is intended to prevent images or audio recordings from being used for AI training and deepfakes without consent. In a world without such rules, there would be a risk that economic interests and short-term efficiency gains would take precedence over the protection of fundamental rights and societal values. It is precisely for this reason that regulations such as the European AI Act, the General Data Protection Regulation (GDPR) and the ISO/IEC 42001 standard were created. They aim to promote innovation, minimize risks, prevent abuse and strengthen public trust in AI.

Each of these regulations plays a crucial role in maintaining the balance between technological advancement and the protection of fundamental values. Together, they create a comprehensive framework that guides the development and use of AI in an orderly and responsible manner.

The European AI Act: In 2024, the European Union adopted the AI Act, the world’s first comprehensive legal framework for AI. The regulation classifies AI systems by risk level. Applications posing an unacceptable risk, such as social scoring systems or real-time remote biometric identification in public spaces, are banned, the latter with narrow exceptions for law enforcement. High-risk systems used in critical infrastructure, education, human resources or the justice system are subject to strict assessments and must be registered. Generative models such as those behind ChatGPT are subject to transparency obligations: machine-generated content must be labeled, and providers must publish a sufficiently detailed summary of the content used for training. The AI Act aims to ensure that AI systems are safe, transparent, explainable, non-discriminatory and environmentally friendly.

The General Data Protection Regulation (GDPR): The GDPR establishes seven fundamental principles for any processing of personal data. It obliges organizations to process data lawfully and transparently, define the purpose of processing clearly, collect only necessary data and keep it accurate. In addition, data may only be stored for as long as necessary and must be protected by appropriate security measures, and compliance with all these principles must be demonstrable. The regulation reaches beyond the EU: it also applies to companies elsewhere that offer goods or services to individuals in the EU or monitor their behavior.

ISO/IEC 42001: This is the first international, certifiable standard for AI management systems. It was published in 2023 and covers the entire lifecycle of an AI system, from conception through development and operation to decommissioning. The standard requires companies to identify and manage risks, establish clear responsibilities for AI management and continuously improve processes. Key focuses are transparency, accountability, bias detection, security and data protection. ISO/IEC 42001 complements existing standards such as ISO/IEC 27001 for information security and provides a structured framework that supports meeting legal requirements.

Strategic prerequisites for data sovereignty

Anyone who wants to secure control over their data needs more than just technology. It requires a combination of clear responsibilities, organizational structures and the right technological tools. The basis for this is a shared understanding throughout the company of which data is truly valuable, where it originates and what it is to be used for. The starting point is always to examine the legal basis for each processing activity and to document it transparently. It is equally important not to collect data aimlessly, but to focus collection on clearly defined objectives. Instead of relying on sheer volume, it is worthwhile to concentrate on data that precisely fits the question at hand and provides an undistorted picture of reality. This overarching strategy rests on several essential pillars that cover both organizational and procedural aspects and together ensure data sovereignty:

Roles and responsibilities: Data sovereignty requires a clear distribution of tasks. Responsibilities for collecting, maintaining, securing and strategically using data must be clearly defined, for example through roles such as data stewards or officers for trustworthy AI.

Context and discoverability: A data set unfolds its full value only when its context is understood. This includes information about when it was captured, how it was generated and for what purpose. Such metadata significantly facilitate the discovery and reuse of data.

Access and protection: Not every employee needs access to all information. A tiered access management protects data from accidental changes or unauthorized disclosure.
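Tiered access can be expressed as a comparison between a role's clearance and a data set's sensitivity tier. The following sketch is illustrative only: the tier names, roles and the `can_access` function are assumptions, and real systems would enforce this in the identity and access management layer rather than in application code.

```python
from enum import IntEnum


class Tier(IntEnum):
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


# Hypothetical mapping of roles to the highest tier they may read.
ROLE_CLEARANCE = {
    "intern": Tier.PUBLIC,
    "analyst": Tier.INTERNAL,
    "data_steward": Tier.CONFIDENTIAL,
    "privacy_officer": Tier.RESTRICTED,
}

# Only these roles may modify data, which guards against accidental changes.
WRITE_ROLES = {"data_steward"}


def can_access(role, dataset_tier, action="read"):
    """Deny by default; allow only known roles with sufficient clearance."""
    clearance = ROLE_CLEARANCE.get(role)
    if clearance is None or action not in ("read", "write"):
        return False
    if action == "write" and role not in WRITE_ROLES:
        return False
    return clearance >= dataset_tier


print(can_access("analyst", Tier.INTERNAL))
print(can_access("analyst", Tier.CONFIDENTIAL))
```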

Quality and consistency: Faulty or outdated data leads to incorrect conclusions, both in AI modeling and business reports. Regular data quality checks are therefore essential. Equally important is traceability of changes to build trust in data-driven decisions.
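Regular quality checks of this kind can start small. The sketch below flags missing values, duplicates and outdated records; the field names, the `updated` timestamp and the one-year threshold are assumptions for illustration.

```python
from datetime import date


def quality_issues(records, required, max_age_days, today):
    """Return (record index, issue) pairs for missing, duplicate or stale data."""
    issues = []
    seen = set()
    for i, rec in enumerate(records):
        missing = [f for f in required if rec.get(f) in (None, "")]
        if missing:
            issues.append((i, "missing: " + ", ".join(missing)))
        key = tuple(rec.get(f) for f in required)
        if key in seen:
            issues.append((i, "duplicate"))
        seen.add(key)
        updated = rec.get("updated")
        if updated is not None and (today - updated).days > max_age_days:
            issues.append((i, "outdated"))
    return issues


# Hypothetical customer records.
records = [
    {"id": 1, "email": "a@example.com", "updated": date(2025, 1, 10)},
    {"id": 2, "email": "", "updated": date(2025, 1, 12)},
    {"id": 1, "email": "a@example.com", "updated": date(2023, 1, 1)},
]

found = quality_issues(records, required=("id", "email"),
                       max_age_days=365, today=date(2025, 2, 1))
for index, issue in found:
    print(index, issue)
```

Logging the output of each run together with its date also provides the traceability of changes mentioned above.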

Efficiency through relevance: Many data sets contain information irrelevant to a specific analysis. Targeted filtering removes distracting features, which enhances informativeness and reduces computational effort.

Explainability and fairness: Companies must be able to explain how an AI arrives at its results and whether it treats all groups fairly. This includes actively identifying and correcting biases in the training data. Fair systems are the result of deliberate design.

Strategic partnerships: In times of cloud services and joint data projects, it is crucial to structure collaborations so that sensitive data does not flow out uncontrolled. Technologies such as federated learning or data trusts enable joint projects without having to hand over raw data.

Tool selection: The tools used must fit the company’s own data landscape. What matters is not the amount of technology, but its suitability for the company’s own processes and objectives.

Conclusion: Data sovereignty as a strategic competitive advantage

Control over data is crucial for the success of artificial intelligence. Without high-quality data, every model remains unreliable. Without control over this data, companies lose their ability to shape outcomes and their potential for value creation. And without legal and organizational anchoring, opportunities remain untapped. Anyone who wants to use AI responsibly must therefore consider technical, legal and strategic aspects together.

It becomes clear that for high-performing AI, it is not the mass but the targeted quality of the data that makes the difference. At the same time, a market worth billions is emerging around data preparation, annotation and synthetic data sets, which brings both opportunities and new risks. Companies face the challenge of not only building data holdings but also protecting and structuring them and using them in a targeted way, in the spirit of Smart Data.

Technical concepts such as federated learning, homomorphic encryption or differential privacy make it possible to integrate sensitive information into AI applications without losing control over it. But technology alone is not enough. Only clear regulations such as the AI Act, the GDPR or ISO standards create the framework in which innovation and responsibility go hand in hand.

This makes data sovereignty a central leadership task. It requires legal foundations, lived responsibility, technological competence and a corporate culture that views data not as a by-product but as fundamental capital. Those who invest early in these capabilities strengthen the trust of customers and partners, reduce risks, increase their own independence and unlock new potential for value creation.

Data sovereignty is thus not a peripheral aspect of digitization, but a strategic core factor in the global competition for supremacy in the field of AI.