This guide is based on the Hugging Face CLI from version 0.34.4 onwards. As of this version, the old command huggingface-cli has been replaced by the new command hf. I created this cheat sheet as a concise reference to the Hugging Face CLI: instead of searching the official documentation, I can find the most important commands, descriptions and examples here at a glance.

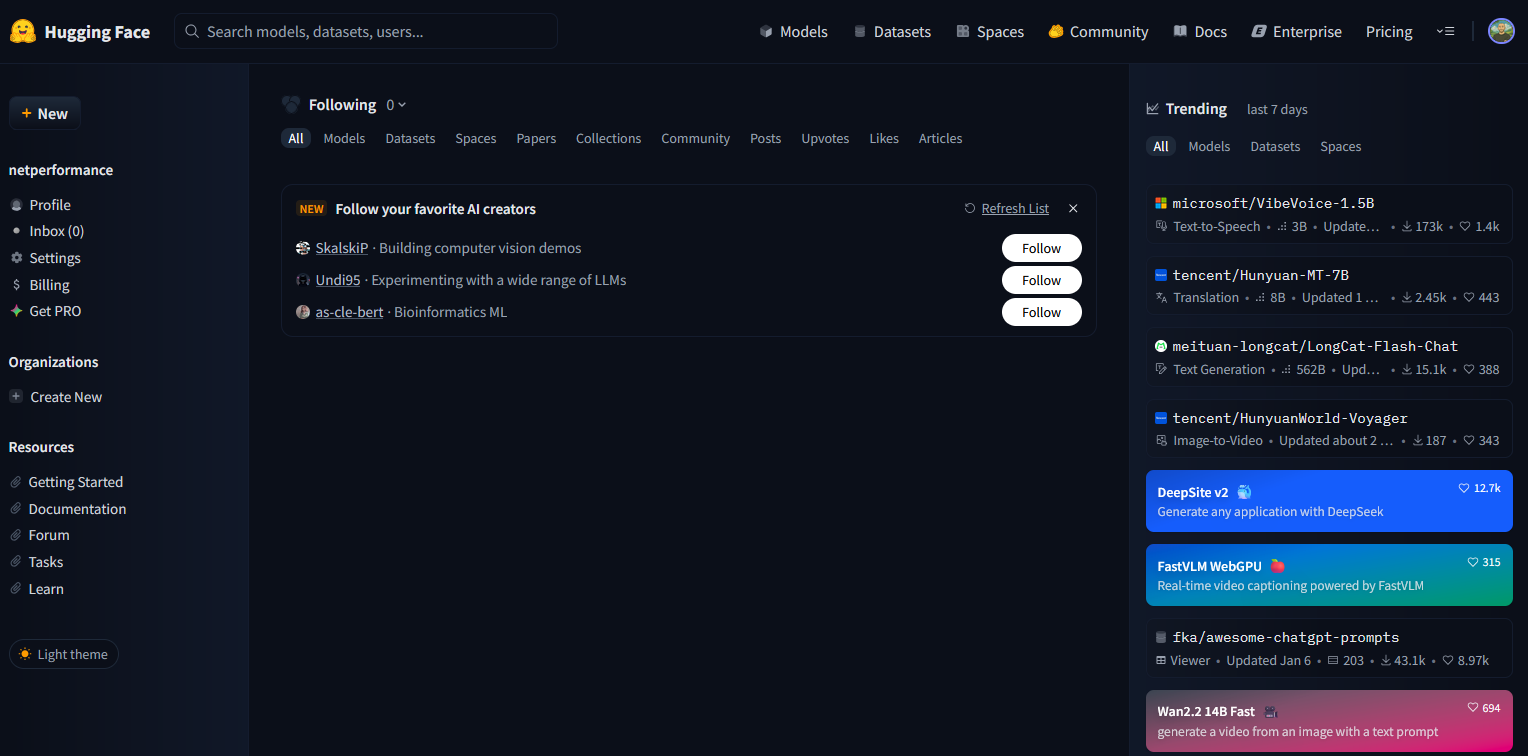

What is Hugging Face?

Hugging Face is a platform for machine learning. At its core is the Hugging Face Hub, which hosts public and private repositories for AI models, datasets and applications (Spaces). Developers can share, download and reuse models there. In addition to the Hub, Hugging Face offers libraries such as transformers, datasets and diffusers that make it easier to use AI models in practice. The Hub thus serves both as a marketplace and as infrastructure for collaborative development.

What is the Hugging Face CLI?

The Hugging Face CLI is a command-line tool that lets you access the Hugging Face Hub directly. You use it to handle authentication, downloads and uploads, and to manage repositories for models, datasets and Spaces. The CLI uses the Python library huggingface_hub internally, so much of what you can do with this library can also be scripted with the CLI.
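
To make the relationship between the CLI and the library more concrete, here is a minimal Python sketch of library calls that roughly correspond to three of the commands in the table below. The helper names (`current_user`, `download_repo`, `cache_size_bytes`) and the `CLI_TO_LIBRARY` mapping are my own illustrative choices, not part of huggingface_hub; only `whoami`, `snapshot_download` and `scan_cache_dir` come from the library. The sketch assumes huggingface_hub is installed and, for the first two helpers, that you have network access.

```python
from typing import Optional

# Illustrative mapping (hypothetical naming) from CLI commands
# to the huggingface_hub functions used in the helpers below.
CLI_TO_LIBRARY = {
    "hf auth whoami": "whoami",
    "hf download": "snapshot_download",
    "hf cache scan": "scan_cache_dir",
}


def current_user() -> str:
    """Rough counterpart of `hf auth whoami`; needs a stored token."""
    from huggingface_hub import whoami  # lazy import: only needed when called

    return whoami()["name"]


def download_repo(repo_id: str, local_dir: Optional[str] = None) -> str:
    """Rough counterpart of `hf download <repo_id> [--local-dir <dir>]`."""
    from huggingface_hub import snapshot_download

    # Returns the local path of the downloaded snapshot.
    return snapshot_download(repo_id=repo_id, local_dir=local_dir)


def cache_size_bytes() -> int:
    """Total size of the local cache, one of the figures `hf cache scan` reports."""
    from huggingface_hub import scan_cache_dir

    return scan_cache_dir().size_on_disk
```

The imports sit inside the functions so that the file can be loaded even where huggingface_hub is not installed; in a real script you would normally import at the top.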

| hf command | Description | Usage example |
|---|---|---|
| hf auth login | Logs in to Hugging Face with a personal access token. The command stores the token locally in the cache and (optionally) in the Git credential manager. | - |
| hf auth whoami | Shows the currently authenticated account. | - |
| hf auth logout | Logs out and removes the token from local storage. | - |
| hf cache scan | Shows the models, datasets, etc. that are cached locally, including sizes, file counts and paths. | - |
| hf cache delete | Deletes cached revisions that you select either in an interactive terminal UI (TUI) or through a file-based selection. | - |
| hf download | Downloads files or entire repositories from the Hub and stores them in the local cache or, with --local-dir, in a directory of your choice. | hf download meta-llama/Llama-3.1-8B-Instruct --local-dir ./llama3-8b |
| hf upload | Uploads a file or a folder to a repository on the Hugging Face Hub. The repository belongs either to your user account or to an organization you are a member of, and you decide whether it is public or private. If the upload is interrupted, you have to start it again, so hf upload is best suited to single files or a small number of files. | Example repository: https://huggingface.co/ |
| hf upload-large-folder | Uploads entire directories; supports resuming after interruption. The repository ID and the folder path are positional arguments. | hf upload-large-folder [your-username]/[my-repository-name] [folder_path] --repo-type model e.g. hf upload-large-folder aaron/my-model ./training_output --repo-type model |
| hf lfs-multipart-upload | Internal helper that Git LFS calls as a custom transfer agent when you push very large files, for example model weights or training checkpoints in the tens of gigabytes range, from a cloned repository. It is not meant to be run by hand. The file is split locally into smaller data blocks, each block is transferred individually to the repository on the Hub, and the blocks are reassembled there into a complete file. The helper becomes active once the repository has been configured with hf lfs-enable-largefiles. | - (invoked by Git LFS, not called directly) |
| hf lfs-enable-largefiles | If you work on a Hugging Face repository with Git and Git LFS, all files, including the large model weights, are present in your local folder. When you push, Git treats them differently: small files such as config.json go directly into the Git history, while large files such as model.safetensors are handled by Git LFS. Only a small pointer remains in the history; the actual data is stored separately in Hugging Face's LFS storage. When cloning, the client first fetches the pointers and then downloads the full files from this storage. GitHub works on the same principle: files above its limit of 100 MB per file cannot be pushed directly and have to go through Git LFS. Hugging Face is set up similarly but optimized for large models, where files in the multi-gigabyte range are common. hf lfs-enable-largefiles configures a cloned repository so that files larger than 5 GB can be pushed, by registering hf lfs-multipart-upload as the Git LFS transfer agent. You only need this command if you actually work with Git in the repository, i.e., clone, commit and push. If you upload files exclusively with hf upload or hf upload-large-folder, it is not necessary. | In the cloned repo: hf lfs-enable-largefiles . |
| hf repo create | Creates a new repository for a model, dataset or Space. | hf repo create [your-username]/[my-repository-name] --repo-type model e.g. hf repo create aaron/my-model --repo-type model |
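| hf repo-files | Manages files in an existing repository on the Hub. Its delete subcommand removes files or folders from a repository without requiring a local clone. | hf repo-files delete [your-username]/[my-repository-name] [path_in_repo] e.g. hf repo-files delete aaron/my-model old-checkpoint.bin |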
| hf env | hf env provides a detailed overview of your local working environment. This includes the installed version of huggingface_hub, operating system, Python version, libraries used such as PyTorch or TensorFlow, and the locations of cache and tokens. The output is mainly intended for diagnostic purposes, for example when filing an issue in the Hugging Face GitHub repository or checking your own configuration. | - |
| hf version | Shows the installed version of hf (i.e., of huggingface_hub). | - |
| hf jobs | Job management on the Hub: runs scripts or Docker containers on Hugging Face infrastructure. | hf jobs run --flavor t4-small ubuntu nvidia-smi |
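
Since the point of the CLI is that it can be scripted, here is a small Python sketch of how I would call it from a script. It builds the argument list for the hf download example from the table and offers a helper that runs any hf command through subprocess. The function names `build_download_command` and `run_hf` are hypothetical helpers of my own; the sketch assumes the hf executable is on your PATH when `run_hf` is called. Note that long options such as --local-dir are written with two ASCII hyphens.

```python
import shutil
import subprocess
from typing import List, Optional


def build_download_command(repo_id: str, local_dir: Optional[str] = None) -> List[str]:
    """Build the argument list for `hf download`, mirroring the table's example."""
    command = ["hf", "download", repo_id]
    if local_dir is not None:
        # Long options take two ASCII hyphens, not a typographic dash.
        command += ["--local-dir", local_dir]
    return command


def run_hf(command: List[str]) -> int:
    """Run an `hf` command and return its exit code.

    Raises FileNotFoundError if the executable is not installed.
    """
    if shutil.which(command[0]) is None:
        raise FileNotFoundError("The `hf` executable was not found on PATH.")
    return subprocess.run(command, check=False).returncode


if __name__ == "__main__":
    cmd = build_download_command("meta-llama/Llama-3.1-8B-Instruct", "./llama3-8b")
    # Only print the command here; pass it to run_hf(cmd) to start the download.
    print(" ".join(cmd))
```

Passing the arguments as a list rather than as one shell string avoids quoting problems with paths that contain spaces.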