The following podcast was generated by AI.

Introduction

In modern industrial manufacturing, predictive maintenance, or Predictive Maintenance (PM), has become a decisive competitive factor. Thanks to rapid advances in Artificial Intelligence (AI), companies can not only monitor the condition of their equipment but also precisely predict its future failure behavior.

This technological revolution is built on three inseparable pillars. Firstly, the data serves as the raw material. In the next step, it is transformed into valuable insights through anomaly detection and model training. Finally, AI uses these insights to make reliable predictions. Understanding these interrelationships is the first and most important step on the path to intelligent maintenance.

Predictive Maintenance is not possible exclusively with time series data but greatly benefits from the combination of various data categories to obtain a complete picture of machine health.

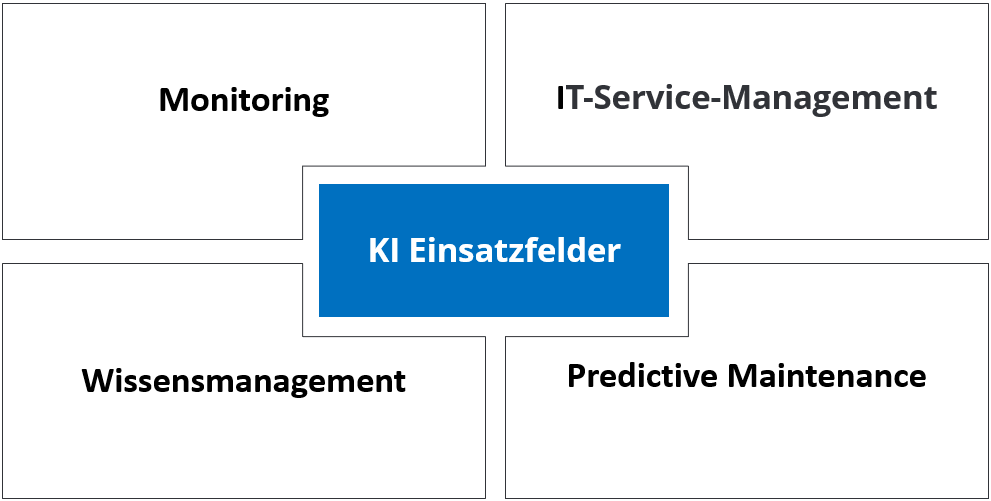

Application Areas of Artificial Intelligence in IT Service and Predictive Maintenance

Artificial Intelligence has evolved in recent years from a research topic to a practical tool. Especially in IT Service and predictive maintenance, it opens up new possibilities to make processes more efficient, accurate, and proactive. Instead of merely reacting to disruptions, companies can use AI to detect anomalies early, process tickets automatically, deliver knowledge dynamically, and accurately forecast the condition of machines or IT systems.

The following examples show how AI can be applied in various areas, from monitoring to IT Service and knowledge management to Predictive Maintenance. They illustrate the advantages that arise when data, pattern recognition, and automation are combined intelligently.

Monitoring

Root-Cause Analysis

Example: Multiple servers fail simultaneously. AI recognizes that not all servers are randomly failing at the same time but that they run through the same switch.

How: AI correlates topology data, e.g. Configuration Management Database (CMDB) or network diagrams, with events. It recognizes patterns in which a parent device, e.g. a switch, is consistently involved and suggests that as the cause.

Predictive Capacity Management

Example: AI predicts that a file server’s storage usage will reach 95 percent in three weeks.

How: Time series models, e.g. AutoRegressive Integrated Moving Average (ARIMA) or Long Short-Term Memory (LSTM), extrapolate usage trends into the future.

Self-Learning Alarm Correlation

Example: Instead of 500 alarms for failed VMs, AI issues a single aggregated alarm “Virtualization host down.”

How: Pattern recognition clusters simultaneously occurring alarms and learns which often occur together in order to consolidate them.

Sensor Fusion

Example: A production robot sends temperature, vibration, and power consumption data. AI detects an impending motor overheating.

How: A model combines the sensor data and learns which value combinations lead to failures.

Adaptive Maintenance Cycles

Example: Instead of replacing the oil filter every six months as scheduled, AI detects that for Machine A a change is only needed after nine months.

How: AI links real usage data with failure statistics and dynamically adjusts maintenance intervals.

IT Service Management

Automatic Ticket Prioritization by Business Impact

Example: An outage in the online shop is immediately classified as “critical.”

How: AI evaluates dependencies between systems in the Configuration Management Database (CMDB) and business functions and automatically assigns the correct priority.

Ticket Volume Forecasting

Example: After a software update, AI forecasts a forty percent increase in tickets.

How: AI analyzes historical ticket volumes in relation to updates or releases and builds a forecasting model.

Agent Assist Bots

Example: While a user reports printer issues over the phone, AI suggests appropriate solutions to the support agent.

How: AI converts speech to text, searches knowledge bases for relevant solutions, and displays them in the support tool.

Voice and Chat Interfaces

Example: An employee says, “My laptop won’t start.” A voice bot automatically creates a ticket.

How: Speech-to-text + intent recognition (NLP). AI understands the request, maps it to a ticket type, and populates the fields.

AI-Powered Change Risk Assessment

Example: Before a database update, AI warns that similar changes have caused performance issues.

How: AI compares planned changes with historical changes and their impacts to compute a risk profile.

Knowledge Management

Automatic Knowledge Generation

Example: After resolving a network issue, AI creates an article.

How: AI (NLP) extracts resolution steps from the ticket and formats them as a knowledge document.

Semantic Search

Example: A support agent asks, “How do I fix printer issues after a Windows update?” AI finds articles containing “Windows Patch” and “Printer Error.”

How: Embeddings convert queries and documents into vectors so that semantic similarity is used instead of exact terms.

Contextual Recommendations

Example: A user repeatedly reports WLAN issues. AI automatically displays all relevant WLAN solutions.

How: The system links user history and ticket contents with similar cases.

Multilingual Support

Example: A French user searches for “Erreur de connexion VPN.” AI delivers the German solution in French.

How: Machine translation is integrated into the knowledge search.

Video Analysis

Example: A user uploads a video showing a machine running roughly. AI detects imbalance.

How: Computer vision analyzes motion patterns in the video and matches them with training data of known faults.

Predictive Maintenance

Wear Detection via Acoustics

Example: AI detects an incipient bearing fault from turbine noises.

How: Audio signals are decomposed into frequency spectra. ML models detect deviations from healthy sound patterns.

Vibration Analysis

Example: AI predicts that a motor bearing will fail in 50 operating hours.

How: Sensor data are compared with known failure patterns, and models estimate the remaining useful life.

Image-Based Condition Monitoring

Example: A camera detects small cracks in a conveyor belt.

How: Computer vision compares real-time images with reference images and identifies deviations.

Remaining Useful Life (RUL) Forecast

Example: AI calculates that a battery has 120 charge cycles remaining.

How: Regression models link historical charge cycles and current sensor values with known aging profiles.

Energy Efficiency Optimization

Example: AI recommends using Compressor B instead of A because A consumes more energy under partial load.

How: Reinforcement learning or optimization algorithms compare energy consumption patterns and simulate alternatives.

Data Categories at a Glance

To understand the relevance of the data and their differences, it helps to categorize them and illustrate their application directly on an industrial plant.

Time Series

Time series data are a sequence of measurements collected in chronological order over a specific time period. They are the most important data category in Predictive Maintenance because they depict the dynamic development of equipment and show how a condition changes over time. In industry, sensors on a manufacturing machine continuously measure vibrations, temperatures, and pressures. These data streams form a time series. A sudden increase in vibrations may indicate a loose screw, while a gradual, steady temperature rise could signal bearing wear.

Operational Data

Operational data provide context for time series data. They describe the operating conditions, i.e. the specific circumstances under which the machine is running. Data on run time, production volume, or current load of a machine are typical operational data. They help interpret sensor measurements correctly. A high vibration value is normal under full load but an alarm signal at idle. A Predictive Maintenance model needs this information to make informed decisions.

Static Data

Static data are information that does not change over time. They provide the fundamental context for dynamic data. In industry, these include serial number, year of manufacture, or technical specifications of a machine. This data enables the AI model to be refined for different machine types. A prediction for an old, heavily used plant differs from one for a new machine.

Maintenance and Failure History

Maintenance and failure history encompass information about past events such as repairs, parts replacements, and documented failures. They are essential for training AI models. A maintenance log documenting when a bearing was replaced and what type of defect occurred is an important historical datum. This knowledge is crucial for identifying the patterns in time series data that precede a failure.

By combining all these data categories, AI-powered Predictive Maintenance systems can not only detect when something is going wrong but also why. The ability to understand these interrelationships is the first and most important step on the path to intelligent maintenance.

Anomaly Detection: Searching for the Unusual

Once data has been collected, the first and most important step is to identify atypical behaviors. An anomaly (also called an outlier or deviation) describes a pattern or data point that significantly differs from the majority of the data. This deviation can indicate an unusual, potentially critical event. It is not merely a faulty measurement but a signal pointing to an emerging change, defect, or impending failure.

For AI, understanding anomalies is essential. Instead of relying solely on predefined thresholds, an intelligent system learns the “normal” behavior of the machine. This way, subtle but critical deviations that fall below usual alarm levels can be detected early. A sudden spike in vibrations or an unexpected temperature drop are examples of such deviations that may indicate beginning wear or an imminent defect. Specialized machine learning techniques are well suited for this.

An Isolation Forest model, for example, isolates anomalies in a dataset by treating them as outliers that require only a few “questions” to separate them from the rest of the data. An Autoencoder, on the other hand, is a neural network trained to reconstruct normal data. When confronted with an anomaly, it cannot correctly reproduce it, resulting in a high reconstruction error and thus a clear signal of a deviation.

Feature Engineering: Preparing Data for AI

Raw sensor data are often too complex for an AI model to process directly. This is where feature engineering comes in. It is the process of extracting meaningful features from raw time series data that the AI model can better understand.

Consider hourly temperature readings. An AI model that only sees these raw values may not recognize patterns. An experienced data analyst, however, can derive new, more relevant features from this data, such as the standard deviation of temperature over the last 24 hours to indicate fluctuation, the maximum or minimum temperature of the previous day, or the slope of the temperature trend over the last week to identify a gradual increase.

These derived features are far more informative for AI than raw values. By incorporating domain knowledge about the machine and its operation into these features, the foundation for a powerful and accurate predictive model is established.

In summary, converting raw sensor data into actionable insights for the machine is a two-step process: First, anomalies are detected to signal potential hazards. Then the data are prepared through feature engineering so that AI can effectively use them. Only with these steps does the collected data treasure become usable by the “brain” of the system, the AI model.

How Does Feature Engineering Work?

Feature engineering is the process of deriving new, relevant features from raw sensor data. An AI model that only sees hourly temperature values may struggle to recognize patterns because it lacks context. An experienced data analyst, however, can extract new information from this data. Some of the key methods for extracting features from sensor data include statistical features. These involve calculating simple statistical values over a specific time window, such as the mean, standard deviation, minimum, or maximum. For example, the standard deviation of vibration data over the last 10 seconds can indicate system fluctuations. Next are time-based features, where features reflecting temporal dynamics are extracted. These include moving averages, rate of change, or the duration a measurement stayed above a certain threshold. An advanced method is frequency-based features, especially used for vibration data. Using the Fast Fourier Transform (FFT), time series data are transformed into the frequency domain. This allows the detection of specific frequencies that indicate certain defects. An increased amplitude at a particular frequency may signal a bearing fault long before the vibrations become noticeable in the time domain.

Human vs. Machine: Who Does Feature Engineering?

Feature engineering is a blend of art and science. The question of whether it is done by humans or AI cannot be answered with a simple “either/or.” Traditionally, feature engineering is performed manually by data scientists and domain experts, such as mechanical engineers. These experts know the physical laws of the machine and understand which parameters indicate a defect. Their expertise is indispensable for identifying the most relevant features, often resulting in the best predictive models. In recent years, methods have also emerged where AI extracts features itself. Deep learning models, particularly neural networks like Autoencoders or LSTMs, can learn directly from raw data which features are relevant for prediction. This approach reduces manual effort and is especially useful for very complex data where human intuition reaches its limits. The most effective approach is often a hybrid one: human expertise provides direction and identifies basic features, while AI automatically learns the finer, more complex patterns.

Handing Over to the Model

Let’s imagine a pump that is being monitored. Sensor data are collected hourly and the relevant features are extracted. These features are then compiled into a table.

| Time Window | Average Temperature (°C) | Vibration Std Deviation | Frequency Peak at 50Hz (Amplitude) | Condition (Label) |

|---|---|---|---|---|

| 10:00 | 45.2 | 0.8 | 0.12 | OK |

| 11:00 | 46.1 | 0.9 | 0.15 | OK |

| 12:00 | 46.5 | 1.1 | 0.18 | OK |

| 13:00 | 47.9 | 2.5 | 0.72 | Defect |

How this table works:

- Each row is an “observation,” i.e. a time window in which sensor data were collected.

- Each column is a “feature” derived from the raw data. These are the processed information pieces that the AI model needs.

- The column “Condition” or “Label” is what the model is to learn. In the training data, we tell the model which feature values corresponded to the machine being “OK” and which corresponded to it having a “Defect”.

An AI model only sees the numeric values in the “Average Temperature”, “Vibration Std Deviation”, etc. columns. It learns that a high standard deviation in vibration and a pronounced frequency peak at 50Hz correlate with a “Defect”. Based on these patterns, it can later predict when a similar condition will occur in new, unseen data.

The Brain of the System: Structure and Training of the AI Model

After the data have been carefully collected, prepared, and enriched with features, the core of a Predictive Maintenance system takes over: the machine learning model. At this point, the “brain” of the system plays the central role in learning from the prepared data to uncover hidden patterns and predict future failures. Choosing the right model is crucial, and the training process requires precision.

Choosing the Right Algorithm

A wide variety of machine learning algorithms can be applied to Predictive Maintenance, but some have proven particularly effective. The choice heavily depends on the type of data and the complexity of the problem. Classification algorithms are ideal when the goal is to classify a machine as “healthy” or “faulty.” Random Forest or Gradient Boosting are good choices here because they are robust and work well with tabular features. For very complex and dynamic data, such as those in time series, deep learning algorithms often excel. Long Short-Term Memory (LSTM) networks are ideal because they can understand temporal dependencies in the data. They “remember” patterns that develop over long periods, such as a gradually increasing vibration level. Autoencoders are also well suited because they learn a model of the “normal state” and identify any deviation as an anomaly.

The Training Process: Learning from Historical Data

Training an AI model is a systematic process in which the model analyzes a vast amount of historical data. The goal is to learn the hidden relationships between input features and the desired output. In practice, several main approaches are used.

Supervised Learning: Learning with Labels

This approach is used when historical data carry a clear label, such as “OK” for normal operation or “Defect” for a failure. The model is fed these labeled data and learns to distinguish the characteristics of a “good” state from those of a “bad” one. The prepared data are first split into three sets: training data, validation data, and test data. The bulk of the data is used to train the model. Validation data help prevent overfitting, where the model learns the training data too well but fails on new data. Test data are only used at the very end to evaluate the final performance of the fully trained model.

Unsupervised Learning: Learning without Labels

This approach is especially relevant for anomaly detection, as failure data are typically extremely rare. The model is trained exclusively on “normal state” data. It learns how the machine operates under normal conditions without ever seeing a fault. An Autoencoder is an example of such a model. It learns to reconstruct the input data as accurately as possible. When later faced with an anomaly it has never seen, it cannot reconstruct it correctly. The high reconstruction error then provides a clear signal of a deviation and thus a potential defect.

Hybrid Approaches

In practice, hybrid approaches are often used. Semi-supervised learning is particularly relevant because it mirrors real-world industrial conditions: there is a vast amount of unlabeled normal data (“OK”) but very little labeled failure data (“Defect”). Semi-supervised learning uses the large amount of unlabeled data to train a model that understands the structure and distribution of normal data. Then the few labeled data are used to refine the model’s ability to predict failures. This makes the system more robust and accurate. Another hybrid approach is reinforcement learning, which allows the model to learn through trial and error how to optimize a machine’s operating conditions to minimize wear and extend its lifespan.

Pretraining vs. Fine-Tuning

In the world of Artificial Intelligence, there are various methods to train and adapt models. The choice of method depends on the goal, the available data volume, and computational resources. The following sections describe the main approaches in ascending order of complexity and effort, from the simplest to the most demanding.

Zero-Shot Learning: Applying Without Training

Zero-Shot Learning is the simplest and least resource-intensive method. It does not involve traditional training. Instead, a pre-trained model is applied directly to a task without any fine-tuning with new data. The model uses its general knowledge to make a prediction. This is the quickest way, requiring no proprietary training data, but it is often less precise than fine-tuned models.

Example: A vendor provides a pre-trained time series anomaly detection model for pumps. A manufacturing company can apply this model immediately to its pump data to detect deviations without collecting its own failure data or adjusting the model.

Partial Fine-Tuning: Efficient Customization

Partial Fine-Tuning is an efficient method to adapt a pre-trained model. In this approach, the layers of the neural network that contain general domain knowledge (e.g. industrial machinery) are frozen. Only the task-specific layers are trained with new data. Compared to full fine-tuning, this method requires significantly less computational power and data. It represents a compromise between immediate applicability and complete customization.

Example: A company uses a base model trained on general vibration data from motors. Instead of adapting the entire model, it trains only the task-specific layers with data from a particular production line. This enables quick and cost-effective adaptation to new equipment.

Full Fine-Tuning: Complete Adaptation

Full Fine-Tuning is a resource-intensive form of customization. A pre-trained model is taken and the entire neural network is further trained with a new, machine-specific dataset. All model parameters are adjusted, which demands high computational power. Unlike pretraining, the process does not start from scratch. Instead, the general knowledge learned by the base model is refined for a specific application. Compared to partial fine-tuning, all layers of the model are updated.

Example: An energy company uses a pre-trained Time Series Foundation Model based on wind turbine data. With full fine-tuning, it adapts the model to the specific conditions, climate, and maintenance history of its own wind farm installations to maximize prediction accuracy.

Pretraining (Training from Scratch)

Pretraining is the most elaborate and computationally intensive method. A completely new AI model is trained from scratch on a very large, domain-specific dataset. The model starts with no prior knowledge and must learn all patterns and interrelationships from raw data itself. This is the most fundamental approach and differs from all others because it does not rely on existing models. It is the opposite of fine-tuning.

Example: A major machinery manufacturer with decades of historical data from thousands of its turbines trains a proprietary model. The goal is to create a model that possesses a deep, industry-specific understanding of turbine behavior without relying on general knowledge from external providers.

Conclusion

Predictive Maintenance and AI in IT Service are only at the beginning of their potential. The examples show that companies can already achieve significant advantages today through the use of intelligent methods. From early anomaly detection to the automation of service processes to precise predictions of remaining useful life of machines, the role of AI is becoming increasingly business-critical. The key is to use the right data sources, continuously improve models, and enable meaningful collaboration between humans and machines. This transforms reactive maintenance into a proactive and strategic tool that reduces costs, avoids downtime, and secures competitive advantages.