Introduction

With the advent of large language models (LLMs) such as GPT, many people wonder how to provide these models with structured, precise information. Although LLMs are capable of answering questions very convincingly, many of their answers are based solely on statistical language probabilities, not on logical inference or explicit factual knowledge. This is where the use of an ontology offers systematic added value.

This article uses a fictional mission in the "Lord of the Rings" universe to demonstrate how an ontology can support an LLM in answering complex questions.

What is an Ontology?

An ontology is a formal model for the structured representation of knowledge. It is used to map real-world facts, contexts, and dependencies through concepts and their relationships. Technically, it is usually based on the RDF model (Resource Description Framework), where knowledge is represented in the form of triples: a subject, a predicate and an object. These triples form the basic unit for describing facts and relationships in a knowledge domain.

Instead of simply listing terms, an ontology models reality in its dependencies. It describes how entities relate to one another. For example, it can be recorded that a subject ("Aragorn") has a certain attribute ("sword fighting"). This relationship is expressed via a predicate ("hasSkill"). Aragorn, a main character from Lord of the Rings, is an experienced fighter with pronounced skills in sword fighting and leadership.
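To make the triple idea concrete, here is a minimal sketch in plain Python. It assumes that a triple can be held as a simple named tuple; the identifiers `SwordFighting` and `Leadership` and the helper `match` are illustrative names, not part of any library.

```python
from typing import NamedTuple


class Triple(NamedTuple):
    subject: str
    predicate: str
    obj: str


# Facts from the paragraph above; the spelling of the skill names is an assumption.
TRIPLES = [
    Triple("Aragorn", "hasSkill", "SwordFighting"),
    Triple("Aragorn", "hasSkill", "Leadership"),
]


def match(triples, subject=None, predicate=None, obj=None):
    """Return all triples that agree with every pattern field that is not None."""
    return [
        t for t in triples
        if (subject is None or t.subject == subject)
        and (predicate is None or t.predicate == predicate)
        and (obj is None or t.obj == obj)
    ]


aragorn_skills = [t.obj for t in match(TRIPLES, subject="Aragorn", predicate="hasSkill")]
print(aragorn_skills)
```

Leaving a field as `None` works like a wildcard, which is the same idea that graph query languages express with variables.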

This structured knowledge can be used by machine systems such as an LLM to provide more precise answers, because the model then relies not only on statistical probabilities but also on explicitly modeled background knowledge.

Such triples can be stored in graph databases like Neo4j and analyzed and visualized with Python libraries like py2neo, rdflib or networkx.

Ontology Example:

Assume that we model an ontology with characters from Lord of the Rings. The ontology contains the following information:

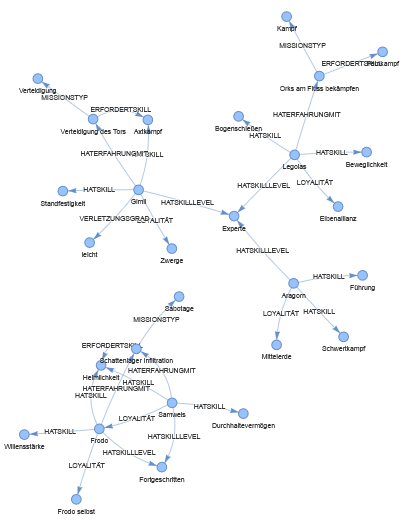

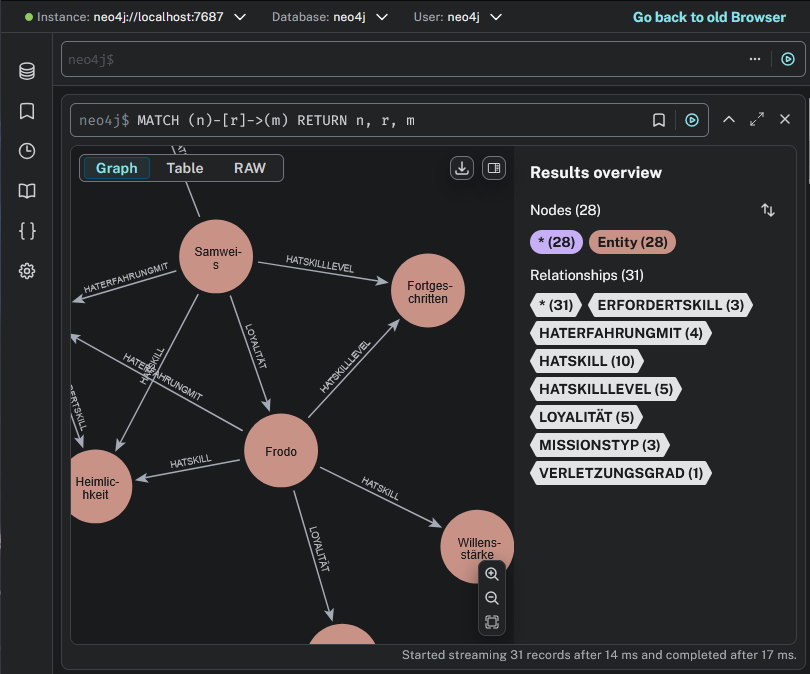

Visual representation of the above ontology:

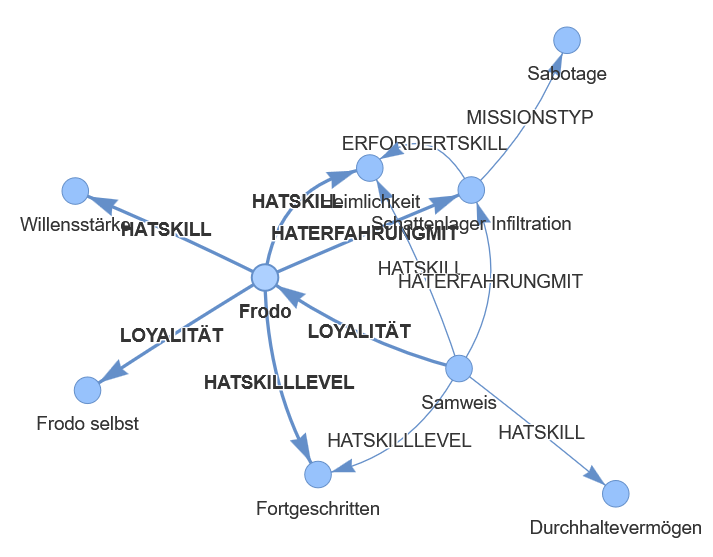

Frodo detail view:

What an Ontology Provides in Combination with an LLM

Instead of simply asking an LLM an open-ended question (and thereby leaving it up to the model whether it resorts to hallucinations or fragmentary knowledge), one can direct the LLM to first consider structured knowledge from an ontology. This has several advantages:

Consistency

The LLM relies only on defined and verifiable facts.

Restriction of the search space

The search for answers is purposefully guided.

Explainability

Decisions can be traced through the underlying graph.

Combination of logic and language

The ontology provides the facts, the LLM formulates them elegantly.

Example: A Mission in the Lord of the Rings Universe

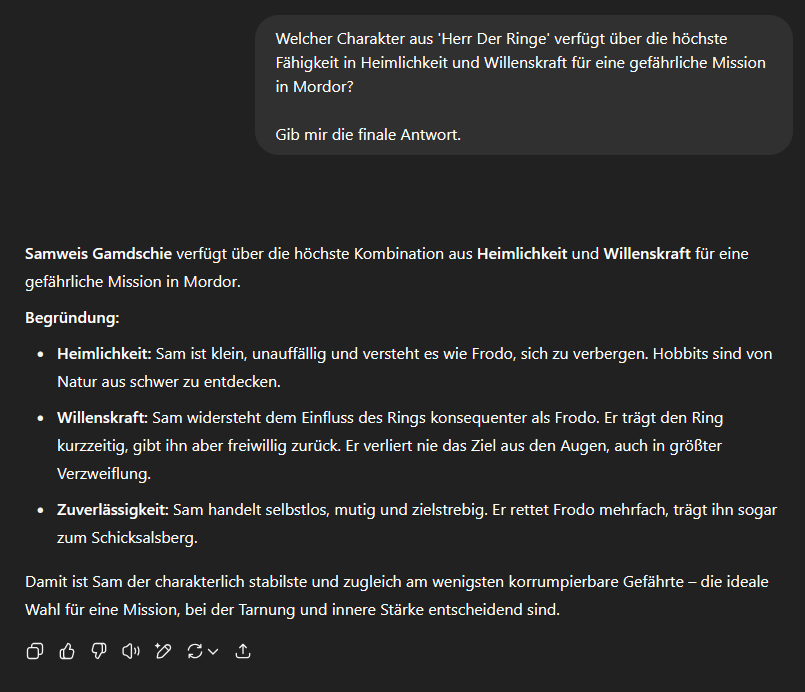

Suppose we put the following question to an LLM:

The LLM, in this case ChatGPT, answers as follows:

The example shows how an LLM, in this case ChatGPT, delivers a coherent answer that is nevertheless not grounded in a specific factual basis. The answer draws on general world knowledge that the model learned during training from a variety of publicly available sources. It thus reflects a probable viewpoint, but not necessarily the facts that apply in the current context.

At their core, LLMs operate on the basis of probabilities. They calculate which answer to an input appears statistically most likely, based on the language and knowledge space in which they were trained. In many cases, this results in plausible outcomes. However, as soon as the question targets domain-specific knowledge, such as internal expertise, project-related contexts, or specially curated facts, such models encounter limits without additional guidance.

This is where the added value of an ontology comes into play. An ontology makes it possible to model such specialized knowledge in a structured way, i.e., which entities exist, what properties they have and how they relate to one another. In an ontology, it can be defined exactly that Frodo possesses both the ability Stealth and the ability Willpower, whereas Samwise has Stealth but no Willpower. This knowledge is explicitly stored and machine-readable.
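The difference between guessing and looking up can be sketched in a few lines. The example below stores exactly the three facts named above and treats anything not modeled as false; that closed-world reading is an assumption of this sketch, and the helper names `has_skill` and `skills_of` are hypothetical.

```python
# Explicit facts from the paragraph above, stored as (subject, predicate, object).
FACTS = {
    ("Frodo", "hasSkill", "Stealth"),
    ("Frodo", "hasSkill", "Willpower"),
    ("Samwise", "hasSkill", "Stealth"),
}


def has_skill(character: str, skill: str) -> bool:
    """A fact counts as true only if it was modeled explicitly (assumption: closed world)."""
    return (character, "hasSkill", skill) in FACTS


def skills_of(character: str) -> list[str]:
    """List every skill modeled for a character, sorted for stable output."""
    return sorted(o for s, p, o in FACTS if s == character and p == "hasSkill")


print(skills_of("Frodo"))
print(has_skill("Samwise", "Willpower"))
```

The answer to "does Samwise have Willpower?" no longer depends on what a model considers likely, only on what the ontology contains.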

By integrating such a structured knowledge model into the LLM processing, the original probability distribution is purposefully influenced. The LLM no longer has to guess who might be suitable, but instead receives clear facts on the basis of which the answer is generated. The probability of a correct and specialized answer increases significantly because the model no longer relies exclusively on its generalized training knowledge, but also accesses specialized, context-specific information.

Thus, the ontology acts as a semantic guiding structure. It can increase factual accuracy and steers the model to reason within a defined context of meaning. Especially in real-world application scenarios, such as within companies, medical systems or technical assistance solutions, this integration can make the difference between plausible and correct.

Extending Prompts with Ontology Knowledge for More Precise LLM Answer Generation

Before a question is sent to the language model, the original prompt is supplemented with structured knowledge from the ontology. This knowledge consists of triples that contain relevant information about the domain, for example about the properties of characters or relationships between concepts. By embedding these facts, the language model can rely not only on statistical patterns but also on the specialized knowledge provided. This leads to better, more explainable answers that are correct for the domain.
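A minimal sketch of such a prompt extension follows. The function name `build_prompt`, the instruction wording and the example question are assumptions for illustration; the article's original prompt is not reproduced here, and the call to the LLM itself is left out.

```python
def build_prompt(question: str, triples: list[tuple[str, str, str]]) -> str:
    """Prepend ontology facts, one triple per line, to the user question."""
    fact_lines = "\n".join(f"- {s} {p} {o}" for s, p, o in triples)
    return (
        "Answer using only the facts below. "
        "If they are insufficient, say so.\n\n"
        f"Facts:\n{fact_lines}\n\n"
        f"Question: {question}"
    )


triples = [
    ("Frodo", "hasSkill", "Stealth"),
    ("Frodo", "hasSkill", "Willpower"),
    ("Samwise", "hasSkill", "Stealth"),
]
# Hypothetical question, phrased for this sketch only.
question = "Which character is best suited for a mission that requires Stealth and Willpower?"
prompt = build_prompt(question, triples)
print(prompt)
```

The resulting string would then be sent to the model in place of the bare question.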

After extending the prompt with the metadata, we receive “Frodo” as the answer instead of, as before, “Samwise”.

In the current example, the entire ontology was embedded in the prompt to answer the question. All available facts were fully provided to the LLM to ensure that the answer is based exclusively on the modeled knowledge. This approach works in smaller examples, but is not suitable for real, extensive applications.

In professional environments, ontologies often contain tens of thousands or even millions of entries. It would be inefficient to transfer all information each time because:

the model’s maximum input length could be exceeded

unnecessary information increases computation time

many irrelevant facts would be included in the prompt

Therefore, in practice, only a relevant excerpt from the ontology that fits the current question is used.
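A rough back-of-the-envelope check illustrates the input-length problem. Both constants below are assumptions chosen only for illustration: real token counts depend on the tokenizer, and real context limits depend on the model.

```python
TOKENS_PER_TRIPLE = 10      # assumption: rough average for one "subject predicate object" line
CONTEXT_WINDOW = 128_000    # assumption: input limit of a hypothetical model


def fits_in_context(num_triples: int, reserved_for_question: int = 500) -> bool:
    """Check whether all triples plus the question fit into the assumed context window."""
    needed = num_triples * TOKENS_PER_TRIPLE + reserved_for_question
    return needed <= CONTEXT_WINDOW


for n in (50, 10_000, 1_000_000):
    print(n, n * TOKENS_PER_TRIPLE, fits_in_context(n))
```

Under these assumptions a toy ontology fits easily, while an ontology with a million triples exceeds the limit by roughly two orders of magnitude.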

How a Meaningful Selection Works

An ontology is a structured model of subjects, predicates and objects. The selection of relevant information for answering a specific question is not carried out by statistical similarity or semantic guessing, but by structured traversal of the modeled relationships.

The key is to deliberately select those subsets of the ontology that are relevant to the question. This requires no ML models, only rule-based mechanisms:

Relationship-oriented navigation

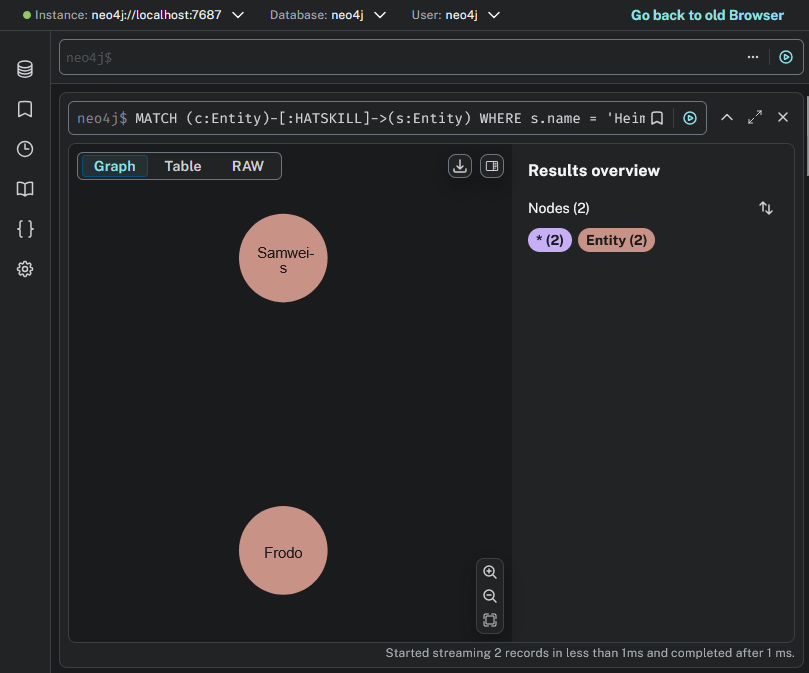

Through queries like „MATCH (c:Charakter)-[:HATSKILL]->(s:Skill) WHERE s.name = ‘Heimlichkeit’“ one can specifically find all entities that are connected to the searched concept.Path-based selection

It can be defined that only entities are considered that are linked to certain mission requirements over several edges, e.g. via „erfordertSkill“ and „hatSkill“.Filtering by attributes

By targeted filtering on node attributes like „hatSkillLevel = Experte“, the selection is further narrowed.Graph-oriented neighborhood analysis

One can use fixed rules to decide which neighboring nodes along defined predicates should be considered, for example to include experiences, loyalties or availabilities in the selection.

This form of selection uses the structure of the ontology, that is, its explicit edges and nodes, to extract exactly the knowledge elements that are necessary for the question.
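The four selection mechanisms can be sketched over an in-memory list of triples. The predicate names come from the list above; the mission name, its required skills, the skill levels and all function names are assumptions made for this example.

```python
# Triples using the predicate names from the list above.
# The mission's required skills and the skill levels are illustrative assumptions.
TRIPLES = [
    ("Frodo", "hatSkill", "Heimlichkeit"),
    ("Frodo", "hatSkill", "Willenskraft"),
    ("Samwise", "hatSkill", "Heimlichkeit"),
    ("ShadowLairInfiltration", "erfordertSkill", "Heimlichkeit"),
    ("ShadowLairInfiltration", "erfordertSkill", "Willenskraft"),
]
NODE_ATTRS = {
    "Frodo": {"hatSkillLevel": "Experte"},
    "Samwise": {"hatSkillLevel": "Anfaenger"},
}


def objects(subject, predicate):
    """All objects reachable from a subject along one predicate."""
    return {o for s, p, o in TRIPLES if s == subject and p == predicate}


def with_skill(skill):
    """Relationship-oriented navigation: who is connected to this skill?"""
    return {s for s, p, o in TRIPLES if p == "hatSkill" and o == skill}


def candidates_for(mission):
    """Path-based selection: mission -erfordertSkill-> skill <-hatSkill- character."""
    required = objects(mission, "erfordertSkill")
    characters = {s for s, p, _ in TRIPLES if p == "hatSkill"}
    return {c for c in characters if required <= objects(c, "hatSkill")}


def filter_by_attribute(nodes, key, value):
    """Attribute filter: keep only nodes whose attribute has the given value."""
    return {n for n in nodes if NODE_ATTRS.get(n, {}).get(key) == value}


def neighborhood(nodes, predicates):
    """Neighborhood analysis: outgoing triples of the nodes along allowed predicates."""
    return [t for t in TRIPLES if t[0] in nodes and t[1] in predicates]


stealthy = with_skill("Heimlichkeit")
suitable = candidates_for("ShadowLairInfiltration")
experts = filter_by_attribute(stealthy, "hatSkillLevel", "Experte")
context = neighborhood(suitable, {"hatSkill"})
print(context)
```

Each function is a fixed rule over nodes and edges, so the same question always yields the same excerpt of the ontology.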

An LLM only comes into play downstream to formulate a linguistic answer based on this compressed factual situation. Therefore, the quality of the answer depends substantially on the quality and relevance of the previously selected substructure of the ontology.

Neo4j

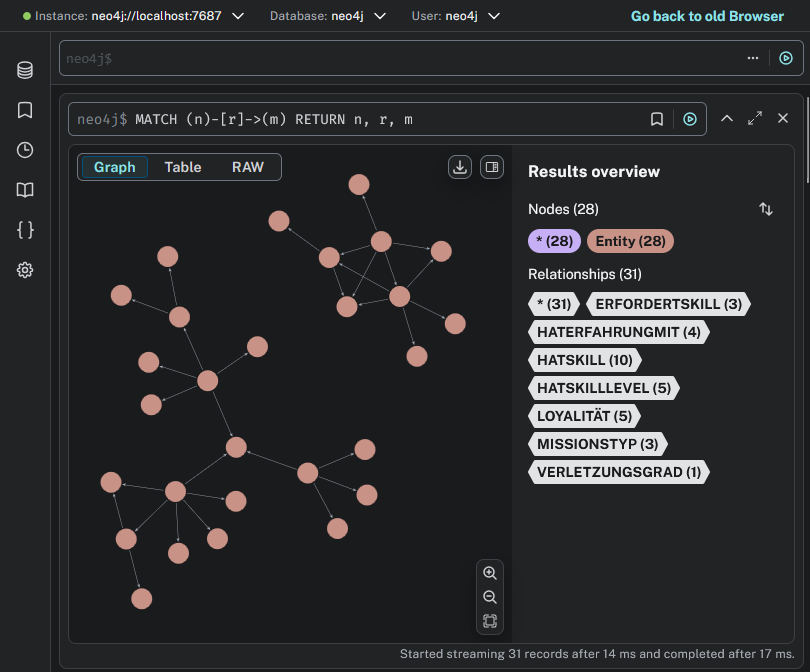

Neo4j is a graph database specifically designed to efficiently map and query relationships between data. Unlike classic relational databases, Neo4j stores information not in tables but in the form of nodes (entities), edges (relationships) and properties.

With Neo4j, complex, interconnected structures can be modeled and analyzed:

Ontologies and knowledge graphs (e.g. which person has which skill and which task requires it)

Social networks (e.g. who knows whom)

Recommendation systems (e.g. which products match user behavior)

Fraud detection (e.g. unusual patterns in payment flows)

IT architectures (e.g. which systems are connected and how)

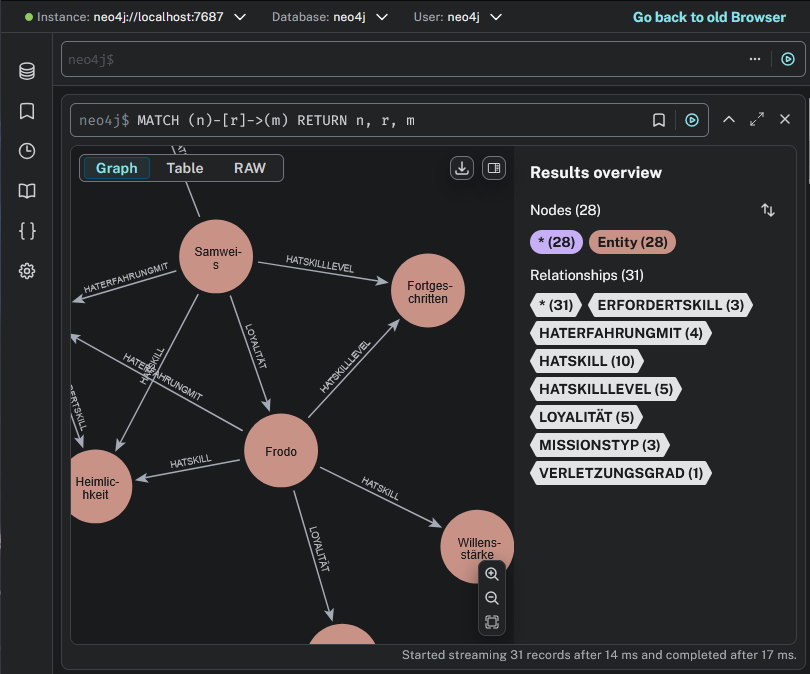

Our ontology looks like this within Neo4j:

Installation via Docker

Navigation via Neo4j

Find all characters (or generally: entities) that possess the skill "Heimlichkeit".

With this query, we specifically consider only the slice of the ontology that is relevant to the question, in order to enrich the language model with appropriate metadata.
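One way to run such a query from Python is the official `neo4j` driver. The sketch below reuses the label and relationship names from the query pattern shown earlier; that character nodes carry a `name` property is an assumption, as are the function name and the connection details in the usage comment.

```python
# Cypher pattern from the article, with the skill passed as a query parameter.
# Assumption: character nodes have a `name` property.
CHARACTERS_WITH_SKILL = (
    "MATCH (c:Charakter)-[:HATSKILL]->(s:Skill) "
    "WHERE s.name = $skill "
    "RETURN c.name AS name"
)


def characters_with_skill(uri, user, password, skill):
    """Run the query against a Neo4j instance and return the character names."""
    # Imported lazily so the module loads without the driver (pip install neo4j).
    from neo4j import GraphDatabase

    driver = GraphDatabase.driver(uri, auth=(user, password))
    try:
        with driver.session() as session:
            result = session.run(CHARACTERS_WITH_SKILL, skill=skill)
            return [record["name"] for record in result]
    finally:
        driver.close()


# Usage with placeholder connection details (adjust to your own instance):
# characters_with_skill("bolt://localhost:7687", "neo4j", "password", "Heimlichkeit")
```

Passing the skill as a parameter instead of concatenating it into the query string keeps the query reusable for other skills.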

Contextual Reduction of the Ontology for LLM Queries

Once the ontology has been searched for relevant relationships, only the dependencies relevant to the question need to be passed to the language model as context, rather than the complete ontology.

LLM Answer

Code Example

OWL (Web Ontology Language)

In the previous sections, we have seen how an ontology in the form of a graph with simple triples (subject-predicate-object) can drastically improve the quality of an LLM’s answers. We modeled entities like Frodo and Stealth and the relationship hasSkill between them.

This approach is already very powerful. However, our system so far only knows that a connection exists, but not what this connection logically means or which rules it is subject to. To close this gap and give our ontology a deeper level of “intelligence”, we use the Web Ontology Language (OWL).

OWL is a W3C-standardized language for creating comprehensive, formal ontologies. You can think of it as an extension that builds on RDF (the framework on which our triple model is based).

If our existing graph provides the vocabulary and the simple sentences, then OWL is the grammar and the set of rules that describes the logical relationships between them. OWL allows us to define the meaning of the terms and relationships in our “Lord of the Rings” world so that a machine can not only read them but also understand and reason about them. Converting or extending our ontology with OWL would bring us several decisive advantages that would further significantly increase the quality of the facts passed to the LLM.

Problem today

Problem today

In our graph, Frodo, Stealth and Shadow Lair Infiltration are all nodes of the same type (Entity). The system does not know that they are a character, an ability and a mission.

Solution with OWL

We can define formal classes: Character, Ability and Mission. We can even create hierarchies, e.g. Hobbit and Elf could be subclasses of Character. Frodo would then be an instance of the class Hobbit.

Advantage

The system can perform much more precise queries (“Find all Hobbits that have the ability ‘Stealth’”). Logical errors, such as assigning a loyalty to a mission, can be detected by a reasoner once suitable domains, ranges and disjoint classes are declared, and the context for the LLM becomes unambiguous.
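The effect of classes and subclass hierarchies can be imitated in plain Python. This is only a sketch of the idea, not OWL itself: a real setup would define the classes in an OWL file and use a reasoner. The individual `Legolas`, his skill and all function names are assumptions added for illustration, and using domain and range as a validation check is a simplification, since in OWL they primarily drive inference.

```python
# Class hierarchy and instance assignments as described above.
SUBCLASS_OF = {"Hobbit": "Character", "Elf": "Character"}
INSTANCE_OF = {
    "Frodo": "Hobbit",
    "Samwise": "Hobbit",
    "Legolas": "Elf",  # hypothetical individual, added only for contrast
    "Stealth": "Ability",
    "ShadowLairInfiltration": "Mission",
}
HAS_SKILL = [("Frodo", "Stealth"), ("Samwise", "Stealth"), ("Legolas", "Stealth")]


def is_a(individual, cls):
    """True if the individual's class is cls or a (transitive) subclass of cls."""
    current = INSTANCE_OF.get(individual)
    while current is not None:
        if current == cls:
            return True
        current = SUBCLASS_OF.get(current)
    return False


def instances_with_skill(cls, skill):
    """Typed query, e.g. all Hobbits that have the ability Stealth."""
    return sorted(s for s, a in HAS_SKILL if a == skill and is_a(s, cls))


def valid_has_skill(subject, obj):
    """Simplified check: hasSkill expects a Character as subject and an Ability as object."""
    return is_a(subject, "Character") and is_a(obj, "Ability")


print(instances_with_skill("Hobbit", "Stealth"))
print(valid_has_skill("ShadowLairInfiltration", "Stealth"))
```

Because `Hobbit` is a subclass of `Character`, a query for characters also returns Frodo without that fact ever being stored explicitly, which is a small instance of knowledge gained through inference.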

By using OWL, we transform our existing knowledge graph from a pure data collection into a dynamic, logical model of our “Lord of the Rings” world. The facts we then pass to the LLM are not only more precise and context-rich, but can also include knowledge that was only generated through logical inference. This is the next decisive step in maximizing answer quality.

Ontology Example

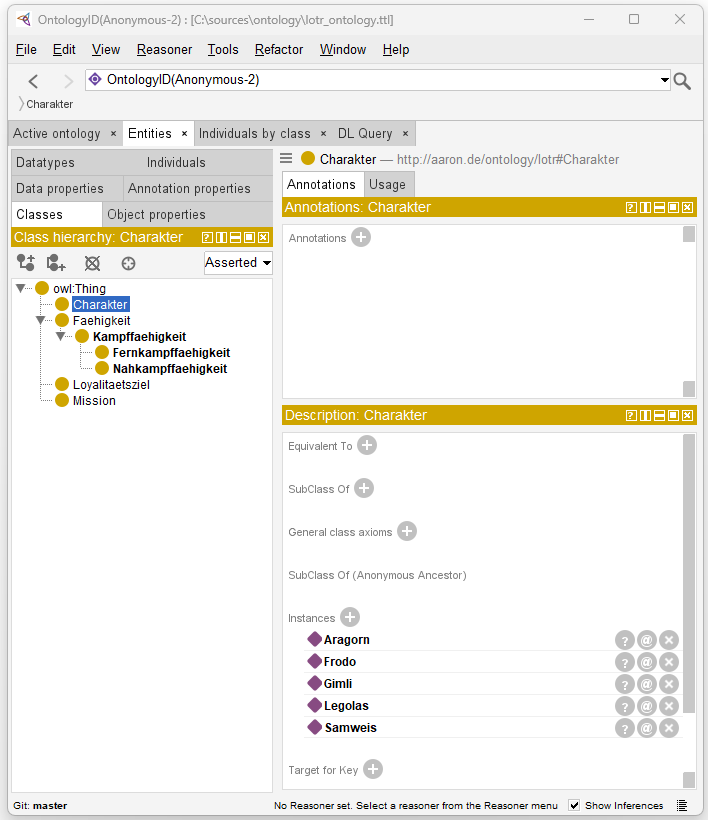

Visualization via Protégé

Protégé is a freely available open-source tool for creating and managing ontologies, for example in OWL. With Protégé, classes, properties and instances can be defined and related. It supports so-called reasoners, i.e. logical inference engines that allow consistency checks and make additional implicit relationships visible. Furthermore, the tool can be extended through plugins and also exists in a web-based version that enables collaborative work.